Functions and Closures — When Code Remembers Where It Was Born

Adding functions to our language reveals the deepest idea in computer science: a function is not just code. It's code plus the environment that gave it meaning.

var threshold = 10;

fun isAbove(n) {

return n > threshold;

}

threshold = 1000;

print isAbove(5);What does this print? true or false?

If you said false — because threshold is 1000 when the function runs — you understand something important. The function doesn't snapshot the environment at definition time. It closes over the environment. It holds a reference, not a copy. When threshold changes, the function sees the change.

This is a closure. And building one from scratch reveals something that took me years to appreciate about programming languages: a function is not just a block of code. It's code plus the world it was born into.

What we're building

As we explored in the previous chapter about building the interpreter, our language can evaluate expressions, execute statements, manage scoped variables, and run complete programs. But it can't do the one thing that separates a real programming language from a calculator: abstraction.

You can't name a computation. You can't parameterize behavior. You can't pass logic as a value. Everything is concrete, immediate, one-shot.

Functions fix all of that. Here's what we need to add:

Function declarations:

fun add(a, b) { return a + b; }Function calls:

add(3, 4)→ evaluates to7Return statements:

returnexits the function with a valueClosures: functions that capture variables from their enclosing scope

This is the largest extension we've made to the language. It touches the grammar, the lexer (new keywords), the parser (new syntax), and the interpreter (new execution model). But the core insight fits in a single sentence: a function is an object that, when called, creates a new environment and runs its body inside it.

The grammar changes

Four new rules join the grammar from the earlier chapter where we defined the language's formal structure:

funDecl = "fun" IDENTIFIER "(" parameters? ")" block ;

parameters = IDENTIFIER ( "," IDENTIFIER )* ;

returnStmt = "return" expression? ";" ;

call = primary ( "(" arguments? ")" )* ;

arguments = expression ( "," expression )* ;And statement gains two new alternatives:

statement = varDecl | funDecl | returnStmt | exprStmt

| printStmt | ifStmt | whileStmt | block ;The lexer needs two new keywords: fun and return. That's a two-line change to the keyword dictionary we built earlier:

// In Scanner — add to Keywords dictionary

["fun"] = TokenType.Fun,

["return"] = TokenType.Return,And two new entries in the TokenType enum: Fun and Return.

New AST nodes

The parser produces three new node types. A function declaration, a return statement, and a call expression:

public abstract record Stmt

{

// ... existing nodes ...

public record FunDecl(Token Name, List<Token> Parameters, List<Stmt> Body) : Stmt;

public record ReturnStmt(Token Keyword, Expr? Value) : Stmt;

}

public abstract record Expr

{

// ... existing nodes ...

public record Call(Expr Callee, Token Paren, List<Expr> Arguments) : Expr;

}FunDecl stores the function's name, its parameter tokens, and the body as a list of statements. ReturnStmt holds the return keyword token (for error reporting) and an optional return value. Call stores the expression being called (usually a variable, but could be any expression that evaluates to a callable), the closing parenthesis token (for error reporting on arity mismatches), and the argument list.

Parsing functions

The parser needs three additions. First, recognizing function declarations at the statement level:

private Stmt Declaration()

{

if (Match(TokenType.Fun)) return FunDeclaration();

if (Match(TokenType.Var)) return VarDeclaration();

return Statement();

}

private Stmt.FunDecl FunDeclaration()

{

var name = Consume(TokenType.Identifier, "Expected function name.");

Consume(TokenType.LeftParen, "Expected '(' after function name.");

var parameters = new List<Token>();

if (!Check(TokenType.RightParen))

{

do

{

if (parameters.Count >= 255)

throw new ParseException(Peek(), "Cannot have more than 255 parameters.");

parameters.Add(Consume(TokenType.Identifier, "Expected parameter name."));

} while (Match(TokenType.Comma));

}

Consume(TokenType.RightParen, "Expected ')' after parameters.");

Consume(TokenType.LeftBrace, "Expected '{' before function body.");

var body = BlockStatements();

return new Stmt.FunDecl(name, parameters, body);

}Second, parsing return statements:

private Stmt Statement()

{

if (Match(TokenType.Return)) return ReturnStatement();

// ... rest of existing statement parsing ...

}

private Stmt.ReturnStmt ReturnStatement()

{

var keyword = Previous();

Expr? value = !Check(TokenType.Semicolon) ? Expression() : null;

Consume(TokenType.Semicolon, "Expected ';' after return value.");

return new Stmt.ReturnStmt(keyword, value);

}Third, parsing function calls. This is a left-recursive rule — add(1)(2) should parse as calling the result of add(1) with argument 2. We handle this by treating calls as a postfix operation on primary expressions:

private Expr Call()

{

var expr = Primary();

while (Match(TokenType.LeftParen))

{

var arguments = new List<Expr>();

if (!Check(TokenType.RightParen))

{

do

{

if (arguments.Count >= 255)

throw new ParseException(Peek(), "Cannot have more than 255 arguments.");

arguments.Add(Expression());

} while (Match(TokenType.Comma));

}

var paren = Consume(TokenType.RightParen, "Expected ')' after arguments.");

expr = new Expr.Call(expr, paren, arguments);

}

return expr;

}The while loop makes this work with chained calls. The 255-argument limit is arbitrary but practical — it matches what the JVM and CLR impose, and nobody writes functions with 256 parameters.

The callable interface

Before the interpreter can call functions, it needs to know what "callable" means. Not every value can be called — only functions (and later, potentially classes). We define an interface:

public interface ILoxCallable

{

int Arity { get; }

object? Call(Interpreter interpreter, List<object?> arguments);

}Two members. Arity returns the number of parameters the function expects. Call executes the function with the given arguments and returns a result. The interpreter is passed in so the function body can call back into Evaluate and Execute.

The interface is tiny. Two members. But it's the seam where data becomes behavior — where a value stored in a variable can suddenly do something.

The function object

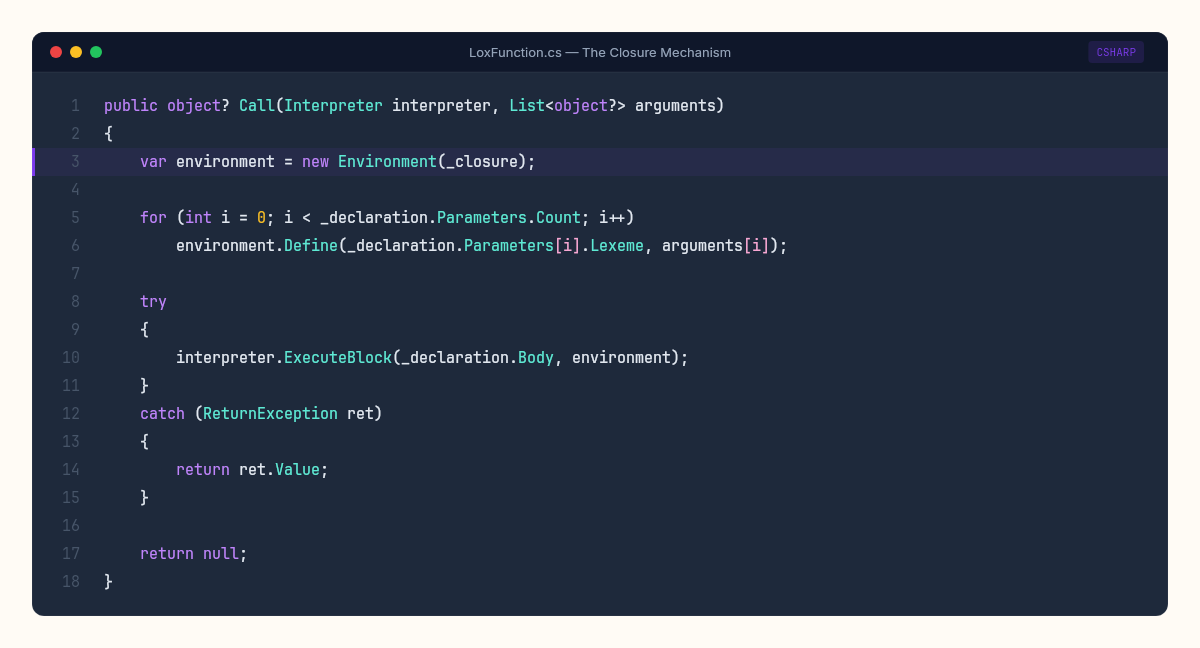

Closures happen at exactly this point. A LoxFunction wraps a function declaration together with the environment that was active when the function was defined:

public class LoxFunction : ILoxCallable

{

private readonly Stmt.FunDecl _declaration;

private readonly Environment _closure;

public LoxFunction(Stmt.FunDecl declaration, Environment closure)

{

_declaration = declaration;

_closure = closure;

}

public int Arity => _declaration.Parameters.Count;

public object? Call(Interpreter interpreter, List<object?> arguments)

{

var environment = new Environment(_closure);

for (int i = 0; i < _declaration.Parameters.Count; i++)

environment.Define(_declaration.Parameters[i].Lexeme, arguments[i]);

try

{

interpreter.ExecuteBlock(_declaration.Body, environment);

}

catch (ReturnException ret)

{

return ret.Value;

}

return null;

}

public override string ToString() => $"<fn {_declaration.Name.Lexeme}>";

}Read the Call method carefully. It creates a new environment whose parent is the closure — not the current environment at the call site. Then it binds the arguments to the parameter names in that new environment. Then it executes the body.

This is the entire mechanism of closures. When a function is defined, we store the current environment as its closure. When the function is called, we don't use the caller's environment — we use the captured one. The function remembers where it was born.

In 1936, Alonzo Church formalized this idea as the lambda calculus — a system where functions are the only primitive. No numbers, no booleans, no control flow. Just functions that take arguments and return results. Church proved that this is enough for any computation. Every number can be encoded as a function. Every boolean can be a function. Even if/else can be a function that takes two arguments and returns one of them.

What we've built is not lambda calculus. But the core operation — capturing an environment at definition time and using it at call time — is exactly what Church described. The lambda calculus doesn't just predate computers. It predates Turing machines. Church published his paper months before Turing published his. The function came first.

Church encoding — functions all the way down

Church's claim sounds impossible at first. Functions are enough for all computation? No numbers? No booleans? No if statements?

Here's the trick. You can encode booleans as functions. True is a function that takes two arguments and returns the first. False is a function that takes two arguments and returns the second:

// Church booleans — true and false as functions

// True = takes two args, returns first

// False = takes two args, returns second

fun TRUE(a) { fun inner(b) { return a; } return inner; }

fun FALSE(a) { fun inner(b) { return b; } return inner; }

// IF is just function application

fun IF(condition) {

fun then(thenBranch) {

fun else(elseBranch) {

return condition(thenBranch)(elseBranch);

}

return else;

}

return then;

}Look at IF. It takes a condition (which is itself a function — either TRUE or FALSE), then a "then" branch and an "else" branch. It calls the condition with both branches. If the condition is TRUE, it returns the first argument — the then branch. If FALSE, the second — the else branch. Conditional logic, built from nothing but function application.

Numbers work the same way. Church encoded zero as a function that takes another function and applies it zero times. One applies it once. Two applies it twice. Addition becomes "apply the function this many more times." Multiplication is function composition repeated.

Nobody writes production code this way. That's not the point. The point is the proof: if you have functions, closures, and nothing else, you can encode any data structure and any computation. Our little language can express Church encodings because it has closures — a function like TRUE returns an inner function that closes over a. That's the completeness claim made concrete. Not theoretical. Running.

Return as exception

There's an odd pattern in the Call method: we catch a ReturnException. This is how return statements work. A return can appear deep inside nested blocks and loops. It needs to unwind the entire call stack back to the function boundary. Exception handling gives us this for free:

public class ReturnException : Exception

{

public object? Value { get; }

public ReturnException(object? value) : base()

{

Value = value;

}

}In the interpreter's Execute method, return throws this exception:

case Stmt.ReturnStmt s:

object? returnValue = s.Value is not null ? Evaluate(s.Value) : null;

throw new ReturnException(returnValue);Using exceptions for control flow feels wrong. It's the kind of thing code reviewers flag. But here it's exactly the right tool: return is a non-local jump, and exceptions are how C# handles non-local jumps. The alternative — threading a "should return" flag through every recursive call — is more complex and more error-prone. Sometimes the pragmatic solution is the correct one.

Interpreting declarations and calls

Two additions to the interpreter's Execute and Evaluate methods. Function declaration creates a LoxFunction and stores it in the environment:

case Stmt.FunDecl s:

var function = new LoxFunction(s, _environment);

_environment.Define(s.Name.Lexeme, function);

break;And function calls evaluate the callee, check that it's callable, verify arity, and invoke:

private object? EvaluateCall(Expr.Call expr)

{

var callee = Evaluate(expr.Callee);

var arguments = expr.Arguments.Select(Evaluate).ToList();

if (callee is not ILoxCallable callable)

throw new RuntimeError(expr.Paren, "Can only call functions.");

if (arguments.Count != callable.Arity)

throw new RuntimeError(expr.Paren,

$"Expected {callable.Arity} arguments but got {arguments.Count}.");

return callable.Call(this, arguments);

}Notice: arguments are evaluated left-to-right before the call happens. This is eager evaluation. In a lazy language, arguments would be wrapped in thunks — unevaluated expressions passed to the function and only evaluated when used. That's a different design choice with different trade-offs. We chose eager because it's simpler to implement, simpler to reason about, and matches what C# developers expect.

Proving it works

A program that exercises everything:

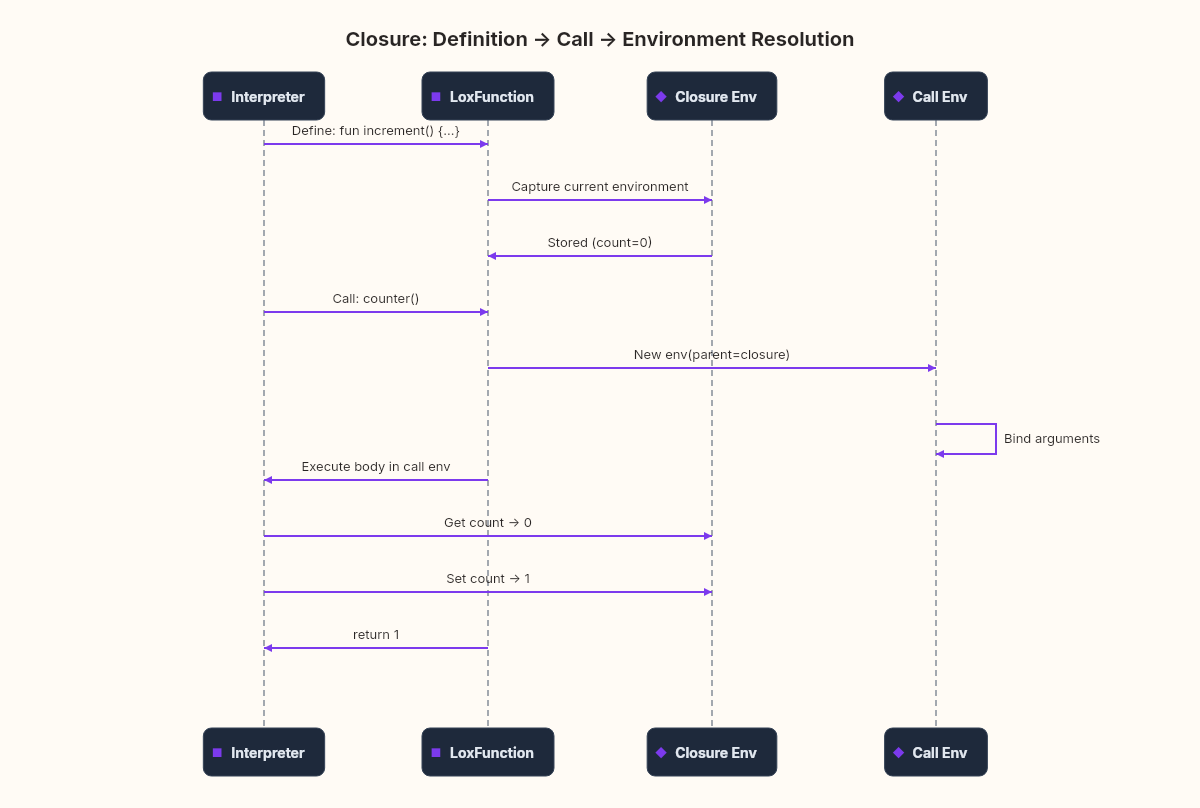

fun makeCounter() {

var count = 0;

fun increment() {

count = count + 1;

return count;

}

return increment;

}

var counter = makeCounter();

print counter();

print counter();

print counter();Output:

1

2

3makeCounter returns increment, which closes over the count variable. Each call to counter() modifies the same count through the captured environment. The variable survives after makeCounter returns because the closure holds a reference to the environment that contains it.

This is the pattern behind iterators, event handlers, module systems, and half of functional programming. And we built it in about 100 lines of C#.

Here's a second test — nested closures three levels deep:

fun makeAdder(x) {

fun addX(y) {

fun addXY(z) {

return x + y + z;

}

return addXY;

}

return addX;

}

var add5 = makeAdder(5);

var add5and10 = add5(10);

print add5and10(20);Output:

35makeAdder returns addX, which closes over x. addX returns addXY, which closes over both y and x — because its environment's parent is the environment that captured x. Three nested scopes, three captured variables, one correct result. The environment chain handles arbitrary depth without any special cases. The same mechanism that works for one level of nesting works for ten.

Where closures bite back

Functions make the language genuinely useful. They also make it harder to understand.

Closures create invisible state. A function can modify variables that no longer appear in any visible scope. Debugging a closure-heavy program means tracing environment chains that exist only at runtime — there's no syntax that tells you which variables a function captures. You have to read the body and mentally walk the scope chain.

The ReturnException pattern breaks stack traces. If someone wraps our interpreter in a try/catch, return exceptions will leak through unless we're careful. Production interpreters handle this more robustly.

And we still have no tail-call optimization. A recursive function that calls itself a thousand times creates a thousand C# stack frames. For deep recursion, our interpreter will throw a StackOverflowException before the Lox-lite program gets to report its own error.

Every feature you add to a language makes some programs possible and all programs harder to reason about. Functions are worth it. But the cost is real.

The closure debugging problem

There's a specific gotcha that every JavaScript developer has encountered at least once, and our language has it too. Consider this:

var callbacks = [];

var i = 0;

while (i < 3) {

fun capture() { print i; }

// imagine we stored capture somewhere

i = i + 1;

}If we could collect all three capture functions and call them later, what would they print? The intuitive answer is 0, 1, 2 — one for each iteration. The actual answer is 3, 3, 3.

All three closures capture the same environment, and that environment contains the same i. They don't snapshot the value of i at the moment they're created. They hold a reference to the environment that holds i. By the time any of them runs, the loop has finished and i is 3.

This is not a bug. It's a direct consequence of how closures work: they close over the environment, not over values. The environment is a living thing — it changes, and every closure that references it sees the changes. If we wanted each closure to capture a different value, we'd need to create a new scope per iteration — a new environment with its own copy of i:

var i = 0;

while (i < 3) {

fun makeCallback(n) {

fun callback() { print n; }

return callback;

}

var cb = makeCallback(i);

// cb now closes over n, not i

i = i + 1;

}Each call to makeCallback creates a fresh environment with its own n, bound to the current value of i. The returned callback closes over that fresh environment. Problem solved — but only if you understand the mechanism well enough to know it was a problem in the first place.

This is the trade-off with closures in a nutshell. They're powerful because they capture live environments. They're confusing for exactly the same reason. The state they hold is invisible — there's no syntax that tells you which variables a function has captured, or whether those variables are still being mutated somewhere else. You have to read the code and mentally walk the scope chain.

What Church knew

In 1936, Church didn't have a computer. He had pencil and paper, and he was trying to answer a question about the foundations of mathematics: what does it mean for something to be computable?

His answer was functions. Not machines, not algorithms, not procedures — functions. A function that takes a function and returns a function. He showed that this single mechanism, applied recursively, can encode any computation that any machine could ever perform.

We haven't proved that here. But we've built something that demonstrates it: a language where functions are values, where they remember their environment, where they can return other functions that remember their environment. The counter that counts. The closure that closes. The abstraction that abstracts.

Next, we'll step back from building and ask what we've actually learned — not just about languages, but about the nature of the tools we use every day without thinking about how they work.