GET, SET, DEL — When a Dictionary Becomes a Database

Our TCP server speaks RESP. Now it needs memory. A ConcurrentDictionary, an expiration engine, and the discovery that the hardest part of a key-value store isn't storing — it's forgetting.

The first time a GET returns a value you stored with SET three minutes ago, something shifts. The bytes went into the server over a TCP connection. They sat in RAM across a process boundary. And now they came back, intact, through a completely different connection. Your program remembered something you told it — and you can feel the seam where "a dictionary in a process" becomes "a service that holds state."

That seam is what we're building today.

The TCP server we built ended with a server that could accept connections, parse RESP commands, and dispatch them — but every command hit a dead end. GET name returned an error because there was nowhere to get it from. SET name "Anto" had nowhere to put it.

Today we give our Redis clone a brain. By the end of this chapter, it will store values, retrieve them, delete them, expire them on a timer, and handle multiple data types. The dictionary becomes a database.

The store

The simplest possible implementation is exactly what you'd guess:

public class RedisStore

{

private readonly ConcurrentDictionary<string, RedisValue> _data = new();

public void Set(string key, RedisValue value)

{

_data[key] = value;

}

public RedisValue? Get(string key)

{

return _data.TryGetValue(key, out var value) ? value : null;

}

public bool Delete(string key)

{

return _data.TryRemove(key, out _);

}

public bool Exists(string key)

{

return _data.ContainsKey(key);

}

public int Count => _data.Count;

}We're using ConcurrentDictionary even though, as we discussed when exploring Redis's architecture, real Redis is single-threaded. Why? Because our server dispatches commands from multiple Task instances — one per client connection. Until we introduce a central dispatch channel (that's coming when we tackle Channels), multiple tasks will access the store concurrently. ConcurrentDictionary handles that correctly without explicit locking.

The RedisValue type is where things get interesting:

public class RedisValue

{

public RedisType Type { get; init; }

public string? StringValue { get; set; }

public List<string>? ListValue { get; set; }

public HashSet<string>? SetValue { get; set; }

public Dictionary<string, string>? HashValue { get; set; }

public DateTime? ExpiresAt { get; set; }

public bool IsExpired => ExpiresAt.HasValue && DateTime.UtcNow >= ExpiresAt.Value;

}

public enum RedisType

{

String,

List,

Set,

Hash

}Each key maps to a RedisValue that carries its type and the appropriate data structure. A string key holds a StringValue. A hash key holds a Dictionary<string, string>. This is the same tagged-union pattern we used in the interpreter — our Expr records carried different payloads depending on whether they were Literal, Binary, or Variable nodes. Different domain. Same architecture.

A hash table that stores hash tables. That's what Redis is at its core — recursion disguised as infrastructure.

Mapping keys to values — the cartography beneath

A hash function connects data structures to an unexpected field.

A hash function is a projection. It takes an input from a large space (all possible strings) and maps it to a small space (an array index). This is mathematically identical to what a cartographic projection does — taking points from the surface of a sphere (latitude/longitude) and mapping them to positions on a flat surface (x/y coordinates).

Every projection distorts. The Mercator projection preserves angles but distorts area — Greenland looks the size of Africa. The Peters projection preserves area but distorts shape. There is no perfect projection. This is a mathematical certainty: you cannot flatten a sphere without distortion. The same constraint applies to hash functions.

A hash function maps an infinite space to a finite one. Collisions are inevitable — two different keys will eventually map to the same index. The question isn't whether you'll get collisions, but how you handle them. .NET's Dictionary<TKey, TValue> uses chaining: each bucket holds a linked list of entries that hashed to the same index. When a collision occurs, the new entry is appended to the chain.

// Conceptually, what Dictionary<string, RedisValue> does:

// 1. Compute hash: "name".GetHashCode() → 842741795

// 2. Map to bucket: 842741795 % bucketCount → 3

// 3. Walk bucket 3's chain looking for key == "name"

// 4. Return the value or append to chain

// The hash function is the projection.

// The bucket array is the flat map.

// Collisions are the distortions.The load factor — the ratio of entries to buckets — determines how much distortion you get. At load factor 0.5, most buckets have zero or one entry. At load factor 2.0, most buckets have chains of 2+ entries, and every lookup requires walking a linked list. .NET resizes the bucket array when the load factor exceeds 1.0, doubling the number of buckets and rehashing every entry. This is expensive — O(n) — but it happens infrequently enough that the amortized cost per operation stays O(1).

Real Redis doesn't use .NET's Dictionary. It uses a custom hash table with incremental rehashing — when the table needs to grow, it doesn't rehash everything at once. It maintains two tables simultaneously and migrates entries a few at a time on each operation. This spreads the cost across thousands of operations instead of concentrating it in one spike.

We're not implementing incremental rehashing. ConcurrentDictionary handles resizing internally, and its performance is more than adequate for our scale. But knowing the difference matters — it's one of the reasons real Redis can guarantee sub-millisecond latency even during growth, while a naive dictionary can stall during rehash.

Expiration — the art of forgetting

Storing data is easy. Forgetting it on schedule is surprisingly hard.

When you call SET session:abc123 "user_data" EX 3600, you're telling Redis to store the value and automatically delete it after 3,600 seconds. This is the backbone of session management, rate limiting, and temporary caching.

The naive implementation would be a timer per key:

// Don't do this — one timer per key doesn't scale

var timer = new Timer(_ => store.Delete(key), null,

TimeSpan.FromSeconds(ttl), Timeout.InfiniteTimeSpan);With 100 keys, this is fine. With 1 million keys, you have 1 million active timers — each consuming memory and a slot in the timer queue. The overhead overwhelms the data.

Real Redis uses a two-strategy approach:

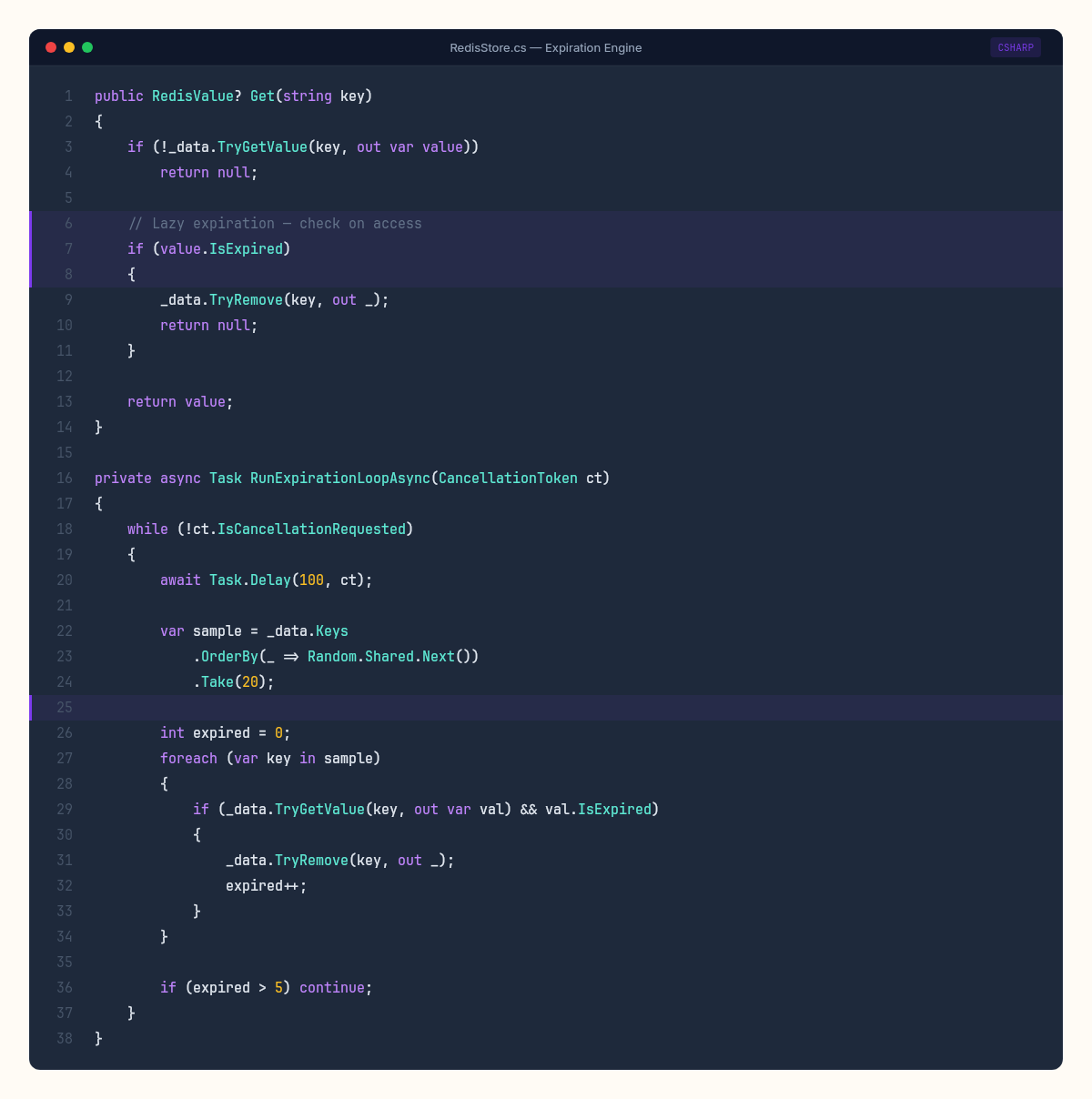

Lazy expiration: When a key is accessed, check if it's expired. If so, delete it and return null. This costs nothing for keys that are never accessed again — they just sit in memory until something finds them.

Active expiration: A background loop periodically samples random keys and deletes expired ones. Redis samples 20 keys at random, deletes any that are expired, and if more than 25% were expired, samples again immediately. This probabilistic approach keeps the expired-key ratio low without scanning the entire keyspace.

Let's implement both:

public class RedisStore

{

private readonly ConcurrentDictionary<string, RedisValue> _data = new();

private readonly CancellationTokenSource _cts = new();

public RedisStore()

{

_ = RunExpirationLoopAsync(_cts.Token);

}

public RedisValue? Get(string key)

{

if (!_data.TryGetValue(key, out var value))

return null;

// Lazy expiration

if (value.IsExpired)

{

_data.TryRemove(key, out _);

return null;

}

return value;

}

public void Set(string key, RedisValue value, TimeSpan? ttl = null)

{

if (ttl.HasValue)

value.ExpiresAt = DateTime.UtcNow + ttl.Value;

_data[key] = value;

}

private async Task RunExpirationLoopAsync(CancellationToken ct)

{

while (!ct.IsCancellationRequested)

{

await Task.Delay(100, ct); // Run 10 times per second

var sample = _data.Keys

.OrderBy(_ => Random.Shared.Next())

.Take(20);

int expired = 0;

foreach (var key in sample)

{

if (_data.TryGetValue(key, out var val) && val.IsExpired)

{

_data.TryRemove(key, out _);

expired++;

}

}

// If >25% expired, run again immediately

if (expired > 5)

continue;

}

}

}The probabilistic sampling is elegant. Instead of tracking every expiration time in a sorted data structure (which would be O(log n) per insertion), we trade precision for speed. Some expired keys will linger for up to a few hundred milliseconds before the background loop finds them. For session management and caching, this is perfectly acceptable. For financial transactions, it would not be.

Expiration is the one place where Redis deliberately chooses approximate correctness over exact correctness. The trade-off is time: scanning every key costs O(n), sampling 20 costs O(1).

Wiring commands to the store

Now we connect the RESP command dispatcher from the TCP server we built to our store:

string ProcessCommand(string[] command, RedisStore store)

{

var name = command[0].ToUpperInvariant();

return name switch

{

"PING" => "+PONG\r\n",

"SET" => HandleSet(command, store),

"GET" => HandleGet(command, store),

"DEL" => HandleDel(command, store),

"EXISTS" => HandleExists(command, store),

"TTL" => HandleTtl(command, store),

"KEYS" => HandleKeys(command, store),

"DBSIZE" => $":{store.Count}\r\n",

"ECHO" => FormatBulkString(command[1]),

_ => $"-ERR unknown command '{command[0]}'\r\n",

};

}

string HandleSet(string[] cmd, RedisStore store)

{

if (cmd.Length < 3)

return "-ERR wrong number of arguments for 'set' command\r\n";

var key = cmd[1];

var value = new RedisValue

{

Type = RedisType.String,

StringValue = cmd[2]

};

// Parse optional EX/PX arguments

TimeSpan? ttl = null;

for (int i = 3; i < cmd.Length - 1; i++)

{

switch (cmd[i].ToUpperInvariant())

{

case "EX":

ttl = TimeSpan.FromSeconds(int.Parse(cmd[i + 1]));

break;

case "PX":

ttl = TimeSpan.FromMilliseconds(int.Parse(cmd[i + 1]));

break;

}

}

store.Set(key, value, ttl);

return "+OK\r\n";

}

string HandleGet(string[] cmd, RedisStore store)

{

var value = store.Get(cmd[1]);

if (value == null)

return "$-1\r\n"; // RESP null bulk string

return FormatBulkString(value.StringValue ?? "");

}The $-1\r\n response for missing keys is RESP's null representation — a bulk string with length -1. It's the protocol's way of distinguishing "this key has an empty string" ($0\r\n\r\n) from "this key doesn't exist" ($-1\r\n). A small detail, but the kind of detail that real client libraries depend on.

Testing it live

With the store wired in, our server handles real Redis operations:

$ redis-cli -p 6379

127.0.0.1:6379> SET greeting "hello from C# Lab"

OK

127.0.0.1:6379> GET greeting

"hello from C# Lab"

127.0.0.1:6379> SET temp "expires soon" EX 5

OK

127.0.0.1:6379> TTL temp

(integer) 4

127.0.0.1:6379> GET temp # after 5 seconds

(nil)

127.0.0.1:6379> DBSIZE

(integer) 1

127.0.0.1:6379> DEL greeting

(integer) 1

127.0.0.1:6379> DBSIZE

(integer) 0The SET/GET cycle works. Expiration works — temp disappears after 5 seconds. DBSIZE reflects the live count. DEL removes keys and returns how many were deleted.

This is a functional key-value store. It accepts connections over TCP, speaks the Redis wire protocol, stores data in memory, and expires keys on schedule. A real Redis client library — not just redis-cli, but StackExchange.Redis, Lettuce, jedis — could connect to this server and perform basic operations without modification.

What the dictionary doesn't know

Our ConcurrentDictionary stores keys and values. It doesn't know about access patterns, memory pressure, or the relationship between keys.

Real Redis tracks additional metadata: last access time (for LRU eviction), memory usage per key (for MEMORY USAGE), encoding type (Redis stores small integers as actual integers, not strings, to save memory). When memory pressure hits the configured maxmemory limit, Redis can evict keys using LRU, LFU, random, or volatile-only policies.

Our store has no eviction policy. If you keep adding keys, it will grow until the process exhausts available memory and the OS kills it. That's the right trade-off for a learning project — eviction is a feature you add when you need it, and the implementation choices (LRU approximation, LFU decay, sampling-based eviction) each deserve their own chapter.

There's also no persistence. Everything in our ConcurrentDictionary lives in the process's heap memory. Kill the process and every key vanishes. Persistence will address this — append-only files and snapshots, each with their own cost on the speed-durability spectrum.

For now, our dictionary is a database that remembers everything it's told and forgets on schedule. It just can't survive a restart. That's the question the rest of this week is built around — how to make something fast also make it last.