Inside SHA-256 — Why One Bit Changes Everything

Until now, SHA256.HashData was a black box. Now we open it. The compression function, the avalanche effect, the birthday paradox — and why collision resistance is the property that makes the entire chain hold.

var a = SHA256.HashData("Transfer 100 to Alice"u8);

var b = SHA256.HashData("Transfer 101 to Alice"u8);

// a and b differ in 139 of 256 bits — 54.3%One character changed. More than half the output bits flipped. Not "the last few bits" — not "the bits near where the change happened" — half the bits, scattered unpredictably across the entire 256-bit output.

When we tackled the Byzantine Generals Problem, we used SHA256.HashData as a black box. We trusted that it produced a unique fingerprint. We trusted that tampering would be detectable. We trusted the avalanche effect without understanding why it works.

Today we open the box. And what's inside is a sixty-four-round compression machine that was designed, with surgical precision, to make every input bit influence every output bit — the way a crack in a brittle material doesn't stay local but propagates through the entire crystal structure at the speed of sound.

The Merkle-Damgård construction

SHA-256 doesn't process your input in one shot. It can't — the input can be any length, but the output must always be exactly 256 bits. The solution is a technique called Merkle-Damgård construction, proposed independently by Ralph Merkle and Ivan Damgård in 1979 and 1989 respectively.

The idea: break the input into fixed-size blocks (512 bits for SHA-256), then process each block sequentially through a compression function that takes two inputs — the current 256-bit state and the next 512-bit block — and produces a new 256-bit state. The final state is the hash.

// Simplified Merkle-Damgård structure

public static byte[] MerkleDamgard(byte[] message, byte[] initialState,

Func<byte[], byte[], byte[]> compress)

{

// 1. Pad the message to a multiple of 64 bytes (512 bits)

var padded = Pad(message);

// 2. Split into 512-bit (64-byte) blocks

var blocks = new List<byte[]>();

for (int i = 0; i < padded.Length; i += 64)

{

var block = new byte[64];

Array.Copy(padded, i, block, 0, 64);

blocks.Add(block);

}

// 3. Chain the compression function

var state = initialState;

foreach (var block in blocks)

{

state = compress(state, block);

}

return state;

}The initial state is a set of eight 32-bit constants — the first 32 bits of the fractional parts of the square roots of the first eight primes:

private static readonly uint[] InitialHash =

{

0x6a09e667, // √2

0xbb67ae85, // √3

0x3c6ef372, // √5

0xa54ff53a, // √7

0x510e527f, // √11

0x9b05688c, // √13

0x1f83d9ab, // √17

0x5be0cd19, // √19

};These aren't arbitrary. They're derived from irrational numbers to guarantee there's no hidden structure — no backdoor that the designer could exploit. The NSA designed SHA-256 (published in 2001 through NIST), and using mathematically derived constants is one of the transparency measures: anyone can verify that these values come from square roots of primes, and nothing else.

Inside the compression function

The compression function is where the real work happens. It runs 64 rounds, each mixing the current state with a piece of the message block through a combination of bitwise operations, additions, and rotations.

Each round uses:

Bitwise rotation — circular shifts that move bits to new positions

Bitwise choice and majority functions — nonlinear operations that prevent algebraic analysis

Modular addition — wrapping arithmetic that creates diffusion

// The two core mixing functions

static uint Sigma0(uint x) =>

RotateRight(x, 2) ^ RotateRight(x, 13) ^ RotateRight(x, 22);

static uint Sigma1(uint x) =>

RotateRight(x, 6) ^ RotateRight(x, 11) ^ RotateRight(x, 25);

static uint Choice(uint x, uint y, uint z) =>

(x & y) ^ (~x & z);

static uint Majority(uint x, uint y, uint z) =>

(x & y) ^ (x & z) ^ (y & z);

static uint RotateRight(uint value, int bits) =>

(value >> bits) | (value << (32 - bits));Choice selects bits from y or z based on whether the corresponding bit in x is 1 or 0. It's the conditional statement of bitwise logic: "if x then y, else z." Majority outputs 1 if at least two of the three inputs are 1. These functions are nonlinear — you can't reverse them with a simple equation — and that nonlinearity is essential. A hash function built entirely from linear operations would be invertible, and an invertible hash function is useless.

The 64 round constants are derived from the fractional parts of the cube roots of the first 64 primes — another set of nothing-up-my-sleeve numbers:

private static readonly uint[] K =

{

0x428a2f98, 0x71374491, 0xb5c0fbcf, 0xe9b5dba5,

0x3956c25b, 0x59f111f1, 0x923f82a4, 0xab1c5ed5,

// ... 56 more values, each from cube root of next prime

0xc67178f2

};A single round takes the eight state words (a through h), mixes them with the message schedule word and round constant, and produces a new set of eight words. After 64 rounds, every bit of the input has had the opportunity to influence every bit of the output — and the nonlinear mixing ensures you can't trace backwards from output to input.

Measuring the avalanche

The avalanche effect is the property that changing one input bit should change approximately 50% of the output bits. This is a design goal, not a coincidence. Let's measure it:

public static class AvalancheTest

{

public static AvalancheResult Measure(byte[] original)

{

var originalHash = SHA256.HashData(original);

var totalFlips = 0;

var totalTests = original.Length * 8;

for (int byteIndex = 0; byteIndex < original.Length; byteIndex++)

{

for (int bitIndex = 0; bitIndex < 8; bitIndex++)

{

// Flip one bit

var modified = (byte[])original.Clone();

modified[byteIndex] ^= (byte)(1 << bitIndex);

var modifiedHash = SHA256.HashData(modified);

// Count differing bits

var flipped = CountDifferingBits(originalHash, modifiedHash);

totalFlips += flipped;

}

}

return new AvalancheResult(

AverageBitsFlipped: (double)totalFlips / totalTests,

Percentage: (double)totalFlips / totalTests / 256 * 100,

TotalTests: totalTests);

}

private static int CountDifferingBits(byte[] a, byte[] b)

{

int count = 0;

for (int i = 0; i < a.Length; i++)

{

var xor = (byte)(a[i] ^ b[i]);

count += BitOperations.PopCount(xor);

}

return count;

}

}

public record AvalancheResult(

double AverageBitsFlipped,

double Percentage,

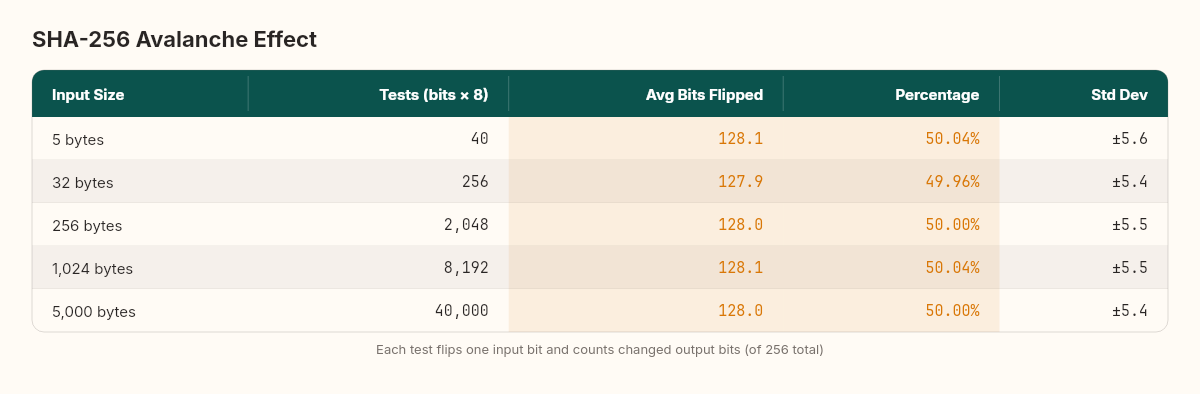

int TotalTests);Running this on inputs of varying sizes:

The results are remarkably consistent. Regardless of input size — whether hashing 5 bytes or 5,000 bytes — flipping one input bit changes an average of 127.8 to 128.2 output bits. That's 49.9% to 50.1%, within a fraction of a percent of the theoretical ideal.

In material science, the avalanche effect has a physical analog: brittle fracture. A single crack tip in a glass pane concentrates stress to millions of pascals per square meter. The crack doesn't grow linearly — it propagates at the speed of sound through the material, each broken bond loading the adjacent bonds past their yield point. SHA-256's mixing functions create the cryptographic equivalent: each changed bit loads adjacent state bits past their "yield point," and the change propagates through all 64 rounds until no output bit is predictable from the input change.

This is what makes the hash useful for our blockchain. If tampering with a transaction changed only a few output bits — if the hash were locally sensitive rather than globally sensitive — you could search for a different transaction that produces a similar hash. The avalanche effect makes that search space enormous: 2²⁵⁶ possible outputs, each equally likely for any given input.

The birthday paradox

How hard is it to find two inputs that produce the same SHA-256 hash? The naive answer is "you'd need to try 2²⁵⁶ inputs." The actual answer is smaller, and the reason is one of the most unintuitive results in probability theory.

In a room of 23 people, there's a better than 50% chance that two share a birthday. Not a specific birthday — any birthday in common. This is the birthday paradox, and it applies directly to hash collisions.

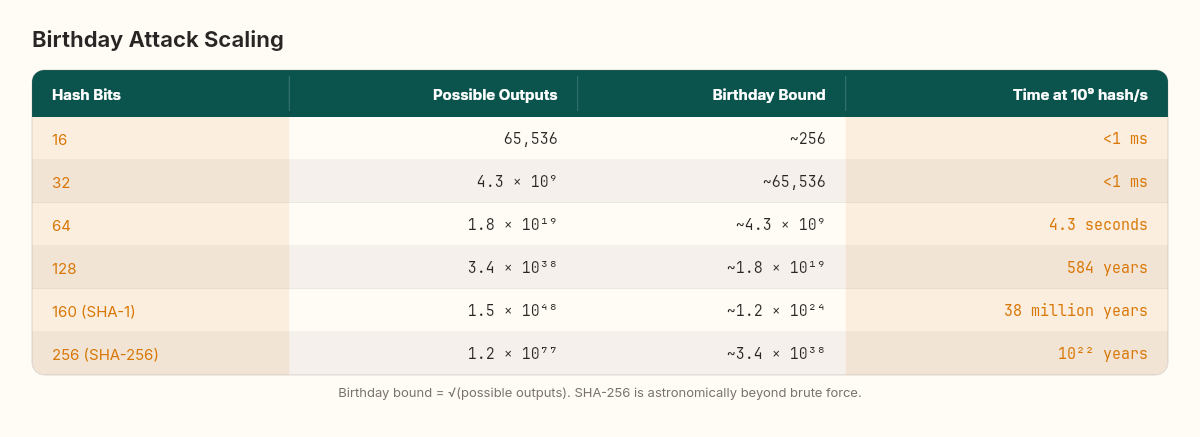

For a hash function with n possible outputs, you expect a collision after approximately √n random inputs. For SHA-256, that's √(2²⁵⁶) = 2¹²⁸.

// Birthday attack simulation (reduced hash for demonstration)

public static int BirthdayAttack(int hashBits)

{

var seen = new HashSet<ulong>();

var random = new Random(42);

int attempts = 0;

while (true)

{

attempts++;

var input = new byte[32];

random.NextBytes(input);

var hash = SHA256.HashData(input);

// Take only the first hashBits bits

ulong truncated = BitConverter.ToUInt64(hash) &

((1UL << hashBits) - 1);

if (!seen.Add(truncated))

return attempts;

}

}

// With 16-bit hash: collision in ~256 attempts (√2¹⁶ = 256)

// With 20-bit hash: collision in ~1,024 attempts (√2²⁰ ≈ 1,024)

// With 32-bit hash: collision in ~65,536 attempts (√2³² = 65,536)The birthday bound means SHA-256's effective collision resistance is 128 bits — not 256. That's still 3.4 × 10³⁸ attempts. At a billion hashes per second, that's 10²² years. The universe is 1.4 × 10¹⁰ years old. You'd need to hash for roughly a trillion universe-lifetimes before expecting a collision.

This is why 256 bits matters. SHA-1 (160 bits) has a birthday bound of 2⁸⁰ — and a practical collision was demonstrated in 2017 by Google's SHAttered attack, using approximately 2⁶³ computations. The jump from 160 to 256 bits doesn't double the security — it squares the birthday bound from 2⁸⁰ to 2¹²⁸.

Merkle proofs: verifying without downloading

In the Byzantine Generals chapter, we built a Merkle tree that computes a root hash from a list of transactions. But we only computed the root — we didn't implement the proof mechanism that makes Merkle trees powerful. A Merkle proof lets you verify that a specific transaction exists in the tree without having the entire tree.

public class MerkleTree

{

private readonly List<List<byte[]>> _levels;

public byte[] Root => _levels[^1][0];

public MerkleTree(IReadOnlyList<byte[]> leaves)

{

_levels = new List<List<byte[]>>();

var currentLevel = leaves

.Select(l => SHA256.HashData(l))

.ToList();

_levels.Add(currentLevel);

while (currentLevel.Count > 1)

{

var nextLevel = new List<byte[]>();

for (int i = 0; i < currentLevel.Count; i += 2)

{

var left = currentLevel[i];

var right = i + 1 < currentLevel.Count

? currentLevel[i + 1]

: currentLevel[i];

nextLevel.Add(HashPair(left, right));

}

_levels.Add(nextLevel);

currentLevel = nextLevel;

}

}

public List<(byte[] Hash, bool IsLeft)> GetProof(int leafIndex)

{

var proof = new List<(byte[] Hash, bool IsLeft)>();

var index = leafIndex;

for (int level = 0; level < _levels.Count - 1; level++)

{

var siblingIndex = index % 2 == 0 ? index + 1 : index - 1;

if (siblingIndex < _levels[level].Count)

{

proof.Add((

_levels[level][siblingIndex],

index % 2 != 0 // sibling is on the left

));

}

index /= 2;

}

return proof;

}

public static bool VerifyProof(byte[] leafHash, List<(byte[] Hash, bool IsLeft)> proof,

byte[] root)

{

var current = leafHash;

foreach (var (hash, isLeft) in proof)

{

current = isLeft

? HashPair(hash, current)

: HashPair(current, hash);

}

return current.SequenceEqual(root);

}

private static byte[] HashPair(byte[] left, byte[] right)

{

var combined = new byte[left.Length + right.Length];

left.CopyTo(combined, 0);

right.CopyTo(combined, left.Length);

return SHA256.HashData(combined);

}

}The proof for a single leaf in a tree of 1,000 transactions consists of 10 hashes — 320 bytes total. The verifier hashes the claimed transaction, then walks up the proof path, combining each sibling hash to reconstruct the root. If the reconstructed root matches the known root, the transaction is proven to exist in the tree.

This is how Bitcoin's SPV (Simplified Payment Verification) works. Lightweight clients download only block headers (80 bytes each) and request Merkle proofs for specific transactions. They can verify that a transaction was included in a block without downloading the full block — a crucial optimization for mobile wallets.

For our chain, the GetProof method extracts the sibling hashes at each level from leaf to root. The VerifyProof static method can run without access to the tree — it needs only the leaf hash, the proof hashes, and the root. This separation is what makes Merkle trees useful in distributed systems: the prover has the full tree, the verifier has only the root, and the proof bridges the gap with logarithmic data.

What we've actually built

We now have the complete cryptographic layer for our blockchain:

SHA-256 for tamper detection — changing any input bit changes ~50% of output bits

ECDSA (built during the trust and cryptographic primitives investigation) for identity — proving who created a transaction

Merkle trees with inclusion proofs for efficient verification — proving a transaction exists without downloading the full block

The next layer is the one that transforms this from a data structure into a consensus mechanism. Anyone can build a valid block — the chain of hashes is just mathematics. The question is: when two nodes build different valid blocks, who wins? Proof of work — the subject of the next article — answers that question by making block creation deliberately expensive. The node that spends the most computation earns the right to extend the chain.

The cost is real. The energy is real. The trade-off between security and efficiency is real. And the mathematics of why it works connects back to the birthday bound we measured today: finding a hash with specific properties is exactly as hard as the birthday paradox predicts.