The CQRS Blueprint — Commands, Events, and Two Models That Don't Talk

The theory chapter explored why reads and writes want different things. Now we build the separation: command handlers, domain events, a write store and a read store that never share a line of code.

    public class WriteDbContext : DbContext { /* normalized, rich, EF Core */ }

    public class ReadDbContext { /* denormalized, flat, Dapper */ }

    // They share a connection string.
    // They share nothing else.

That's the core of what we're building today. Two data access layers, pointing at the same PostgreSQL instance, with completely different schemas, different query strategies, and different consistency guarantees. The write side knows about aggregates, domain events, and business rules. The read side knows about flattened DTOs and fast queries. Neither imports a single type from the other.

The theory chapter explored why CQRS separates reads and writes. This chapter is the how — concrete C# code, every design decision visible, every trade-off named.

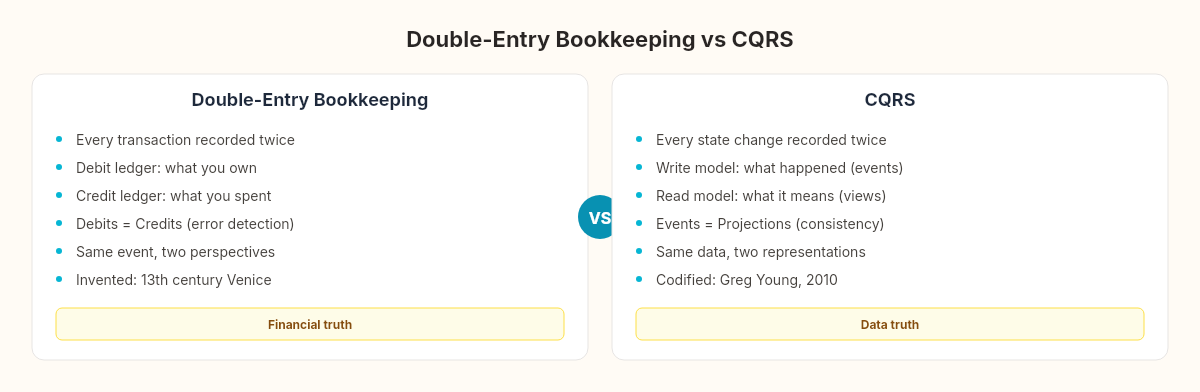

Double-entry bookkeeping

In 1494, Luca Pacioli published Summa de Arithmetica, which included the first comprehensive description of double-entry bookkeeping. The system was already centuries old by then — Venetian merchants had been using it since at least the 13th century — but Pacioli codified its rules.

The core principle: every financial transaction is recorded twice. A debit in one account and a credit in another. Buy inventory for cash? Debit the Inventory account, credit the Cash account. The two entries describe the same event from different perspectives. The Inventory ledger tells you what you own. The Cash ledger tells you what you spent. Neither is complete alone. Together, they provide a full picture — and the fact that debits must always equal credits gives you a built-in error-detection mechanism.

CQRS applies the same insight to data. The write model records what happened (commands executed, events emitted). The read model records what it means (aggregated views, denormalized summaries). Same events, two representations, each optimized for its purpose.

The command side

A command is an instruction to change state. Not a request — an instruction. It carries all the data needed to execute the change, and the handler decides whether to accept or reject it.

    public record CreateOrderCommand(
        Guid CustomerId,
        string ShippingCity,
        string ShippingAddress,
        List<OrderItemDto> Items
    );

    public record OrderItemDto(
        Guid ProductId,
        int Quantity,
        decimal UnitPrice
    );

The command is a plain data object. No behavior, no validation logic, no database references. It's a message, the equivalent of Pacioli's journal entry before it hits the ledger.

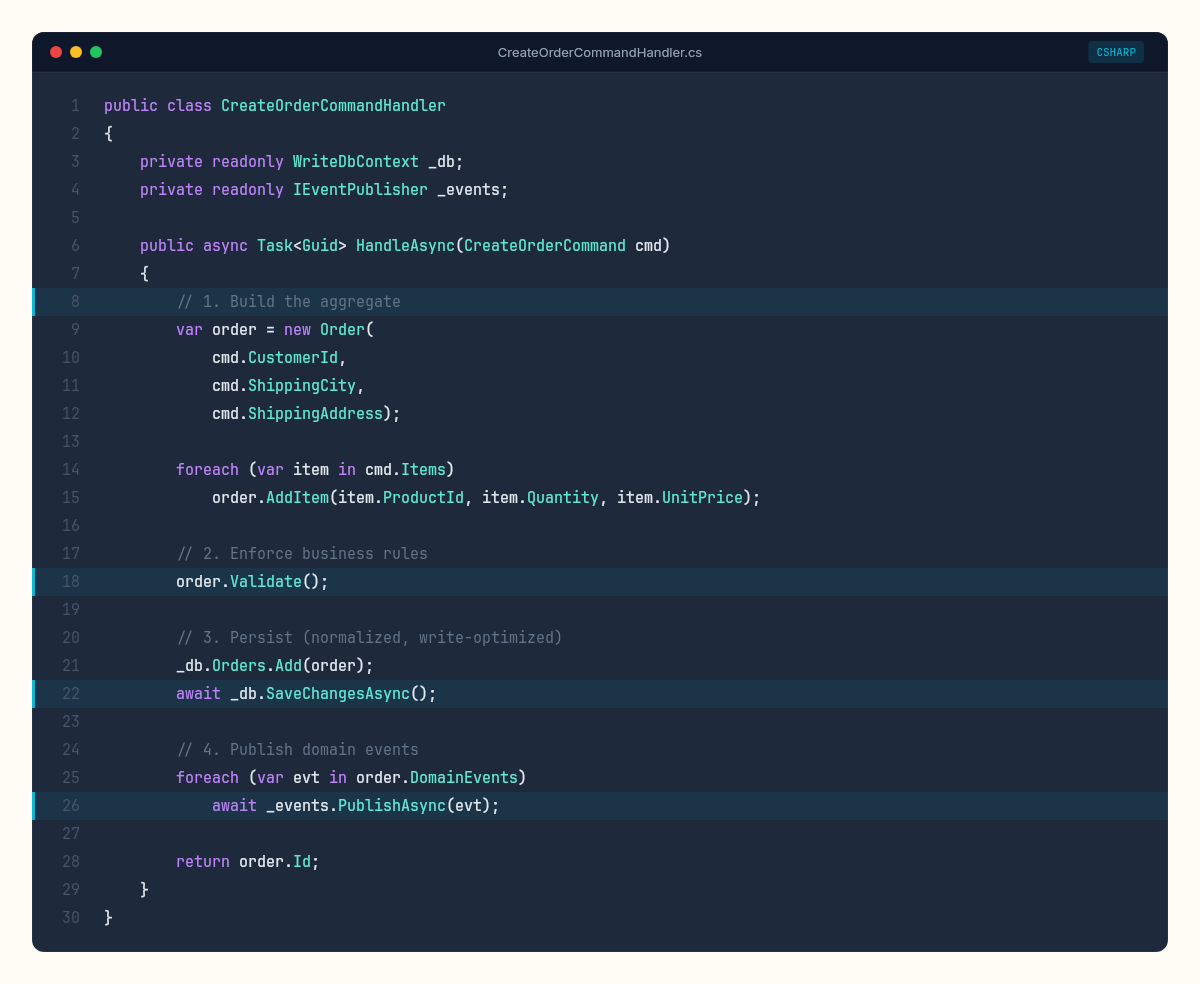

The handler is where behavior lives:

    public class CreateOrderCommandHandler
    {
        private readonly WriteDbContext _db;
        private readonly IEventPublisher _events;

        public CreateOrderCommandHandler(
            WriteDbContext db, IEventPublisher events)
        {
            _db = db;
            _events = events;
        }

        public async Task<Guid> HandleAsync(CreateOrderCommand cmd)
        {
            // 1. Build the aggregate
            var order = new Order(
                cmd.CustomerId,
                cmd.ShippingCity,
                cmd.ShippingAddress);

            foreach (var item in cmd.Items)
                order.AddItem(item.ProductId, item.Quantity, item.UnitPrice);

            // 2. Enforce business rules
            order.Validate();

            // 3. Persist (normalized, write-optimized)
            _db.Orders.Add(order);
            await _db.SaveChangesAsync();

            // 4. Publish domain events
            foreach (var evt in order.DomainEvents)
                await _events.PublishAsync(evt);

            return order.Id;
        }
    }

Three things to notice. First, the handler orchestrates a sequence: build, validate, persist, publish. It doesn't return the order to the caller; it returns an ID. The caller will read order details through the query side, not through the command response.

Second, domain events are collected on the aggregate during the operation and published after persistence succeeds. If SaveChangesAsync throws, no events are published. If event publishing fails, the data is still persisted — we handle that mismatch with the outbox pattern from earlier in the series.

Commands change state. Queries read state. The moment you let a command return rich data, you've collapsed the separation.

Third, the Order aggregate owns its own rules:

    public class Order
    {
        public Guid Id { get; private set; } = Guid.NewGuid();
        public Guid CustomerId { get; private set; }
        public string ShippingCity { get; private set; }
        public string ShippingAddress { get; private set; }
        public OrderStatus Status { get; private set; } = OrderStatus.Pending;
        public decimal TotalAmount { get; private set; }
        public DateTime CreatedAt { get; private set; } = DateTime.UtcNow;

        private readonly List<OrderItem> _items = new();
        public IReadOnlyList<OrderItem> Items => _items;

        private readonly List<IDomainEvent> _domainEvents = new();
        public IReadOnlyList<IDomainEvent> DomainEvents => _domainEvents;

        // Used by EF Core when loading an order from the database. EF cannot
        // bind the public constructor (its 'city' and 'address' parameters do
        // not match property names), and calling it would raise OrderCreated again.
        private Order()
        {
            ShippingCity = string.Empty;
            ShippingAddress = string.Empty;
        }

        public Order(Guid customerId, string city, string address)
        {
            CustomerId = customerId;
            ShippingCity = city;
            ShippingAddress = address;
            _domainEvents.Add(new OrderCreated(Id, customerId));
        }

        public void AddItem(Guid productId, int qty, decimal price)
        {
            if (qty <= 0) throw new DomainException("Quantity must be positive");

            _items.Add(new OrderItem(productId, qty, price));
            TotalAmount = _items.Sum(i => i.Quantity * i.UnitPrice);
            _domainEvents.Add(new OrderItemAdded(Id, productId, qty, price));
        }

        public void Validate()
        {
            if (!_items.Any())
                throw new DomainException("Order must have at least one item");

            if (TotalAmount < 10m)
                throw new DomainException("Minimum order amount is 10.00");
        }

        public void Confirm()
        {
            if (Status != OrderStatus.Pending)
                throw new DomainException($"Cannot confirm order in {Status} state");

            Status = OrderStatus.Confirmed;
            _domainEvents.Add(new OrderConfirmed(Id, TotalAmount));
        }
    }

The aggregate collects domain events as side effects of behavior. OrderCreated when constructed, OrderItemAdded when items are added, OrderConfirmed when the state transitions. These events are the bridge between the write model and the read model: the double entry that keeps both ledgers in sync.
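The handler publishes whatever is in order.DomainEvents, but nothing in the code shown empties that list afterwards, so handling the same tracked instance a second time would publish the old events again. One common way around that is to let the aggregate hand over its pending events and clear the buffer in a single step. The sketch below illustrates the idea with hypothetical names (AggregateRoot, Raise, DequeueEvents, Basket); it is not the article's Order class.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical minimal event contract for this sketch.
public interface IEvent { Guid AggregateId { get; } }

public abstract class AggregateRoot
{
    private readonly List<IEvent> _events = new();

    // Called by subclasses from inside their behavior methods.
    protected void Raise(IEvent evt) => _events.Add(evt);

    // Hands the pending events to the caller and empties the buffer,
    // so a second call cannot return the same events again.
    public IReadOnlyList<IEvent> DequeueEvents()
    {
        var pending = _events.ToArray();
        _events.Clear();
        return pending;
    }
}

public record BasketOpened(Guid AggregateId) : IEvent;
public record BasketItemAdded(Guid AggregateId, Guid ProductId) : IEvent;

public class Basket : AggregateRoot
{
    public Guid Id { get; } = Guid.NewGuid();

    public Basket() => Raise(new BasketOpened(Id));

    public void Add(Guid productId) => Raise(new BasketItemAdded(Id, productId));
}
```

A handler written against this shape would publish the result of DequeueEvents() once, after persistence succeeds.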

The write store

The WriteDbContext is a standard EF Core context, normalized for write correctness:

    using Microsoft.EntityFrameworkCore;

    public class WriteDbContext : DbContext
    {
        // Lets the host configure the provider and connection string
        // (for example through AddDbContext).
        public WriteDbContext(DbContextOptions<WriteDbContext> options)
            : base(options) { }

        public DbSet<Order> Orders => Set<Order>();
        public DbSet<OrderItem> OrderItems => Set<OrderItem>();

        protected override void OnModelCreating(ModelBuilder builder)
        {
            builder.Entity<Order>(e =>
            {
                e.HasKey(o => o.Id);
                e.Property(o => o.TotalAmount).HasPrecision(18, 2);
                e.HasMany(o => o.Items).WithOne()
                    .HasForeignKey("OrderId");
                // Domain events live in memory only; they are not a mapped relationship.
                e.Ignore(o => o.DomainEvents);
            });

            builder.Entity<OrderItem>(e =>
            {
                e.HasKey(i => i.Id);
                e.Property(i => i.UnitPrice).HasPrecision(18, 2);
            });
        }
    }

Normalized. Relationships. Change tracking. Navigation properties. Everything EF Core is good at: maintaining a correct, consistent, relationship-rich model that enforces constraints at the database level.

The domain events

Events are the connective tissue. Each one records what happened, not what to do:

    public interface IDomainEvent
    {
        Guid AggregateId { get; }
        DateTime OccurredAt { get; }
    }

    public record OrderCreated(
        Guid AggregateId,
        Guid CustomerId
    ) : IDomainEvent
    {
        public DateTime OccurredAt { get; init; } = DateTime.UtcNow;
    }

    public record OrderItemAdded(
        Guid AggregateId,
        Guid ProductId,
        int Quantity,
        decimal UnitPrice
    ) : IDomainEvent
    {
        public DateTime OccurredAt { get; init; } = DateTime.UtcNow;
    }

    public record OrderConfirmed(
        Guid AggregateId,
        decimal TotalAmount
    ) : IDomainEvent
    {
        public DateTime OccurredAt { get; init; } = DateTime.UtcNow;
    }

Records are ideal here: immutable, value-equal, with built-in ToString() for debugging. The AggregateId links every event to its source aggregate. The OccurredAt timestamp supports ordering, with one caveat: events raised in quick succession can share a timestamp, so strict ordering within an aggregate needs a sequence number as well.
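A small sketch of what value equality and immutability mean for an event record. PriceChanged and RecordDemo are hypothetical names used only for this demonstration. Note that OccurredAt takes part in equality, so two otherwise identical events created at different instants are not equal.

```csharp
using System;

// Hypothetical event, shaped like the article's records.
public record PriceChanged(Guid AggregateId, decimal NewPrice)
{
    public DateTime OccurredAt { get; init; } = DateTime.UtcNow;
}

public static class RecordDemo
{
    public static (bool Equal, PriceChanged Original, PriceChanged Copy) Run()
    {
        var id = Guid.NewGuid();
        var at = new DateTime(2024, 1, 1, 0, 0, 0, DateTimeKind.Utc);

        var first = new PriceChanged(id, 9.99m) { OccurredAt = at };
        var second = new PriceChanged(id, 9.99m) { OccurredAt = at };

        // Value equality compares every property, OccurredAt included.
        bool equal = first == second;

        // 'with' produces a modified copy; 'first' is left untouched.
        var copy = first with { NewPrice = 12.50m };

        return (equal, first, copy);
    }
}
```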

The read side

Now the other ledger. The read model is denormalized, pre-computed, and optimized for the queries the UI actually needs.

    public class OrderSummary
    {
        public Guid OrderId { get; set; }
        public Guid CustomerId { get; set; }
        public string CustomerName { get; set; } = "";
        public string ShippingCity { get; set; } = "";
        public decimal TotalAmount { get; set; }
        public int ItemCount { get; set; }
        public string Status { get; set; } = "Pending";
        public DateTime CreatedAt { get; set; }
        public DateTime LastUpdatedAt { get; set; }
    }

No navigation properties. No relationships. No ICollection<OrderItem>. Just the flat shape the dashboard needs. The ItemCount is pre-calculated. The CustomerName is pre-joined. The TotalAmount is pre-aggregated. Every field exists because a query needs it.
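To make "pre-joined" and "pre-aggregated" concrete, here is a minimal sketch of flattening a normalized order and its customer into one summary row. All type names (LineItem, NormalizedOrder, Customer, FlatSummary, SummaryBuilder) are hypothetical stand-ins for this illustration, not the article's classes.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical write-side shapes: an order with child rows, and a customer.
public record LineItem(Guid ProductId, int Quantity, decimal UnitPrice);
public record NormalizedOrder(
    Guid Id, Guid CustomerId, string ShippingCity, IReadOnlyList<LineItem> Items);
public record Customer(Guid Id, string Name);

// Hypothetical read-side shape: everything the dashboard needs in one row.
public record FlatSummary(
    Guid OrderId, Guid CustomerId, string CustomerName,
    string ShippingCity, decimal TotalAmount, int ItemCount);

public static class SummaryBuilder
{
    // Does the join and the aggregation once, at write time,
    // so the read query never has to.
    public static FlatSummary Flatten(NormalizedOrder order, Customer customer) =>
        new(order.Id, customer.Id, customer.Name, order.ShippingCity,
            TotalAmount: order.Items.Sum(i => i.Quantity * i.UnitPrice),
            ItemCount: order.Items.Count);
}
```

A function of this shape is also a convenient way to rebuild a summary row from scratch when incremental updates are suspected of having drifted.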

The read store uses Dapper — no change tracking, no entity mapping, no overhead:

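    using Dapper;
    using Npgsql;

    public class OrderReadStore
    {
        private readonly string _connectionString;

        static OrderReadStore()
        {
            // Map snake_case columns (order_id) to PascalCase properties (OrderId).
            // Without this, Dapper leaves unmatched properties at their defaults.
            DefaultTypeMap.MatchNamesWithUnderscores = true;
        }

        public OrderReadStore(string connectionString)
        {
            _connectionString = connectionString;
        }

        public async Task<IEnumerable<OrderSummary>> GetRecentOrdersAsync(
            int limit = 50)
        {
            // One short-lived connection per call: a single NpgsqlConnection
            // must not be shared across concurrent operations.
            await using var connection = new NpgsqlConnection(_connectionString);
            return await connection.QueryAsync<OrderSummary>(
                """
                SELECT order_id, customer_id, customer_name,
                       shipping_city, total_amount, item_count,
                       status, created_at, last_updated_at
                FROM order_summaries
                ORDER BY created_at DESC
                LIMIT @Limit
                """,
                new { Limit = limit });
        }

        // Used by the projection to load the row it is about to update.
        public async Task<OrderSummary?> GetByIdAsync(Guid orderId)
        {
            await using var connection = new NpgsqlConnection(_connectionString);
            return await connection.QuerySingleOrDefaultAsync<OrderSummary>(
                """
                SELECT order_id, customer_id, customer_name,
                       shipping_city, total_amount, item_count,
                       status, created_at, last_updated_at
                FROM order_summaries
                WHERE order_id = @OrderId
                """,
                new { OrderId = orderId });
        }

        public async Task UpsertAsync(OrderSummary summary)
        {
            await using var connection = new NpgsqlConnection(_connectionString);
            await connection.ExecuteAsync(
                """
                INSERT INTO order_summaries
                    (order_id, customer_id, customer_name, shipping_city,
                     total_amount, item_count, status, created_at, last_updated_at)
                VALUES
                    (@OrderId, @CustomerId, @CustomerName, @ShippingCity,
                     @TotalAmount, @ItemCount, @Status, @CreatedAt, @LastUpdatedAt)
                ON CONFLICT (order_id) DO UPDATE SET
                    total_amount = @TotalAmount,
                    item_count = @ItemCount,
                    status = @Status,
                    last_updated_at = @LastUpdatedAt
                """,
                summary);
        }
    }

UpsertAsync is how projections write to the read store; we build that projection engine in the next article in the series about CQRS edge cases. For now, notice the ON CONFLICT ... DO UPDATE: writing the same summary row twice leaves the table in the same state, which matters because events can be replayed. That makes the write itself idempotent. Whether a projection as a whole is idempotent also depends on how it computes the row it writes.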
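ON CONFLICT (order_id) only works if order_id carries a primary key or unique constraint. The article does not show the table, so the DDL below is an assumption: the column types are guesses chosen to match the OrderSummary properties, and the index name is hypothetical. It is wrapped in a C# constant so it could be run from a migration or a startup script.

```csharp
public static class OrderSummarySchema
{
    // Assumed schema: types inferred from OrderSummary, not taken from the article.
    public const string CreateTable = """
        CREATE TABLE IF NOT EXISTS order_summaries (
            order_id        uuid           PRIMARY KEY,  -- target of ON CONFLICT (order_id)
            customer_id     uuid           NOT NULL,
            customer_name   text           NOT NULL DEFAULT '',
            shipping_city   text           NOT NULL DEFAULT '',
            total_amount    numeric(18, 2) NOT NULL DEFAULT 0,
            item_count      integer        NOT NULL DEFAULT 0,
            status          text           NOT NULL DEFAULT 'Pending',
            created_at      timestamptz    NOT NULL,
            last_updated_at timestamptz    NOT NULL
        );

        -- Supports ORDER BY created_at DESC LIMIT n in GetRecentOrdersAsync.
        CREATE INDEX IF NOT EXISTS ix_order_summaries_created_at
            ON order_summaries (created_at DESC);
        """;
}
```

There are no foreign keys to the write-side tables here, in keeping with the idea that the two models share nothing but a connection string.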

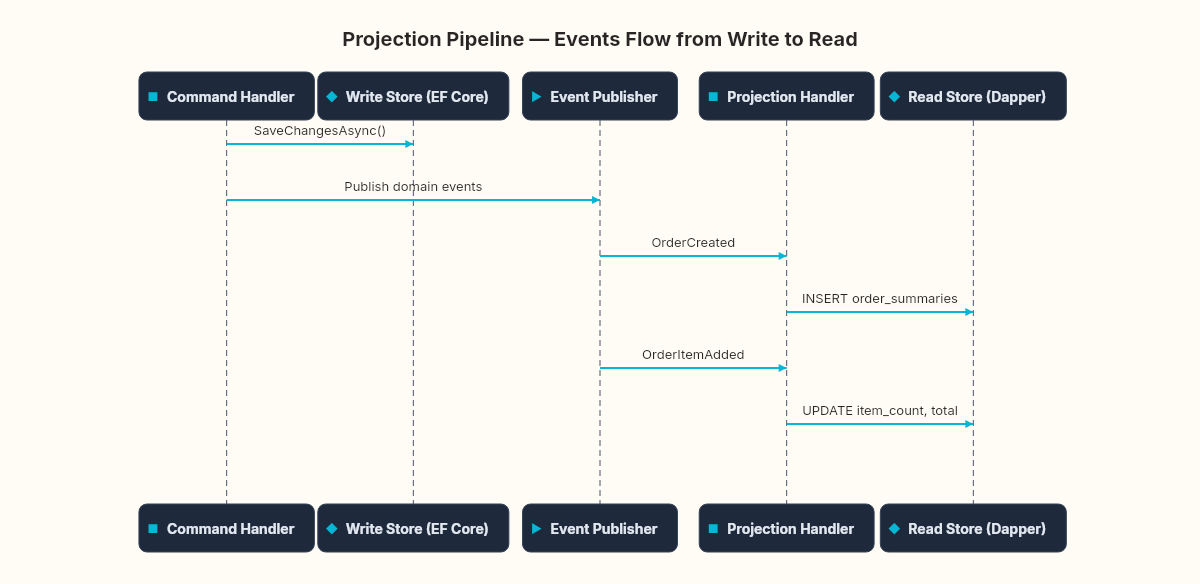

The projection handler

The bridge between write and read — an event handler that transforms domain events into read model updates:

    public class OrderSummaryProjection
    {
        private readonly OrderReadStore _readStore;

        public OrderSummaryProjection(OrderReadStore readStore)
        {
            _readStore = readStore;
        }

        public async Task HandleAsync(IDomainEvent evt)
        {
            switch (evt)
            {
                case OrderCreated e:
                    // CustomerName and ShippingCity keep their defaults here:
                    // OrderCreated does not carry them.
                    await _readStore.UpsertAsync(new OrderSummary
                    {
                        OrderId = e.AggregateId,
                        CustomerId = e.CustomerId,
                        Status = "Pending",
                        CreatedAt = e.OccurredAt,
                        LastUpdatedAt = e.OccurredAt
                    });
                    break;

                case OrderItemAdded e:
                    var existing = await _readStore.GetByIdAsync(e.AggregateId);
                    if (existing != null)
                    {
                        // Read-modify-write increment: not safe to replay as written.
                        existing.ItemCount++;
                        existing.TotalAmount += e.Quantity * e.UnitPrice;
                        existing.LastUpdatedAt = e.OccurredAt;
                        await _readStore.UpsertAsync(existing);
                    }
                    break;

                case OrderConfirmed e:
                    var order = await _readStore.GetByIdAsync(e.AggregateId);
                    if (order != null)
                    {
                        // Absolute values: applying this twice gives the same row.
                        order.Status = "Confirmed";
                        order.TotalAmount = e.TotalAmount;
                        order.LastUpdatedAt = e.OccurredAt;
                        await _readStore.UpsertAsync(order);
                    }
                    break;
            }
        }
    }

Each event updates the read model incrementally. OrderCreated inserts a new summary row. OrderItemAdded increments the count and adjusts the total. OrderConfirmed updates the status and overwrites the total with the aggregate's value. One caveat: the OrderItemAdded branch increments, so replaying that event counts the item twice. Only branches that write absolute values, as OrderConfirmed does, are safe to replay as written. The projection is the translation layer, Pacioli's clerk who records the same transaction in both ledgers.
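One way to make an incrementing handler such as the OrderItemAdded branch safe to replay is to give every event a unique ID and skip IDs that have already been applied. The sketch below shows the idea in memory. EventId is an assumption (the article's IDomainEvent does not define one), and ItemAdded, SummaryRow and DedupingProjection are hypothetical names.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical event shape: EventId is an assumption added for deduplication.
public record ItemAdded(Guid EventId, Guid OrderId, int Quantity, decimal UnitPrice);

public class SummaryRow
{
    public int ItemCount { get; set; }
    public decimal TotalAmount { get; set; }
}

// In-memory stand-in for the read store plus a log of processed event IDs.
public class DedupingProjection
{
    private readonly Dictionary<Guid, SummaryRow> _rows = new();
    private readonly HashSet<Guid> _applied = new();

    public void Handle(ItemAdded evt)
    {
        // HashSet.Add returns false when the ID is already present,
        // so a replayed event is ignored instead of counted twice.
        if (!_applied.Add(evt.EventId)) return;

        if (!_rows.TryGetValue(evt.OrderId, out var row))
        {
            row = new SummaryRow();
            _rows[evt.OrderId] = row;
        }

        row.ItemCount++;
        row.TotalAmount += evt.Quantity * evt.UnitPrice;
    }

    public SummaryRow? Get(Guid orderId) =>
        _rows.TryGetValue(orderId, out var row) ? row : null;
}
```

Against a real database, the processed-ID insert and the row update would have to commit in the same transaction; otherwise a crash between the two reintroduces the problem.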

The command dispatcher

One more piece: the pipeline that routes commands to their handlers:

    using Microsoft.Extensions.DependencyInjection; // GetRequiredService(Type)

    public interface ICommandDispatcher
    {
        Task<TResult> DispatchAsync<TResult>(ICommand<TResult> command);
    }

    public class CommandDispatcher : ICommandDispatcher
    {
        private readonly IServiceProvider _provider;

        public CommandDispatcher(IServiceProvider provider)
        {
            _provider = provider;
        }

        public async Task<TResult> DispatchAsync<TResult>(
            ICommand<TResult> command)
        {
            // Close ICommandHandler<,> over the runtime command type and result type.
            var handlerType = typeof(ICommandHandler<,>)
                .MakeGenericType(command.GetType(), typeof(TResult));

            // dynamic defers overload resolution to runtime, since the
            // concrete command type is not known at compile time.
            dynamic handler = _provider.GetRequiredService(handlerType);
            return await handler.HandleAsync((dynamic)command);
        }
    }

This is a minimal dispatcher: no validation pipeline, no logging middleware, no retry policies. MediatR provides the dispatching plus pipeline behaviors where concerns like those can plug in. We're hand-rolling it here to show that CQRS doesn't require a framework. It's like assembling a clock from gears before buying a Swiss one: you understand what the casing hides. The pattern is the separation, not the tooling.
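The dispatcher relies on ICommand<TResult> and ICommandHandler<,>, which the article does not show, and CreateOrderCommand and its handler would need to implement them to be resolvable. A minimal sketch of one possible shape follows. PingCommand, PingHandler, DictionaryServiceProvider and SimpleDispatcher are hypothetical stand-ins so the example runs without a DI container; the dispatch logic mirrors the article's.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Marker interface: a command that yields a TResult when handled.
public interface ICommand<TResult> { }

// One handler per command type.
public interface ICommandHandler<TCommand, TResult>
    where TCommand : ICommand<TResult>
{
    Task<TResult> HandleAsync(TCommand command);
}

// Hypothetical command and handler, used only to exercise the dispatcher.
public record PingCommand(string Message) : ICommand<string>;

public class PingHandler : ICommandHandler<PingCommand, string>
{
    public Task<string> HandleAsync(PingCommand command) =>
        Task.FromResult($"pong: {command.Message}");
}

// Stand-in for a real container: maps service types to instances.
public class DictionaryServiceProvider : IServiceProvider
{
    private readonly Dictionary<Type, object> _services = new();

    public void Register(Type serviceType, object instance) =>
        _services[serviceType] = instance;

    public object? GetService(Type serviceType) =>
        _services.TryGetValue(serviceType, out var service) ? service : null;
}

public class SimpleDispatcher
{
    private readonly IServiceProvider _provider;

    public SimpleDispatcher(IServiceProvider provider) => _provider = provider;

    public async Task<TResult> DispatchAsync<TResult>(ICommand<TResult> command)
    {
        // Close the open generic over the runtime command type.
        var handlerType = typeof(ICommandHandler<,>)
            .MakeGenericType(command.GetType(), typeof(TResult));

        dynamic handler = _provider.GetService(handlerType)
            ?? throw new InvalidOperationException(
                $"No handler registered for {command.GetType().Name}");

        return await handler.HandleAsync((dynamic)command);
    }
}
```

Registering PingHandler under typeof(ICommandHandler<PingCommand, string>) and dispatching new PingCommand("hi") should route to PingHandler.HandleAsync. Because the call goes through dynamic, a handler with a mismatched signature fails at runtime rather than at compile time, which is the main trade-off of this approach.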

What we have

Two complete data paths. A command enters through the dispatcher, reaches a handler that builds an aggregate, validates business rules, persists to the normalized write store, and publishes domain events. Those events reach projection handlers that update denormalized read models. Queries hit the read store directly through Dapper — no joins, no aggregation, no business logic.

The dashboard query from the previous article about CQRS theory — the 800ms monster with four joins and subqueries — becomes a single SELECT from a flat table. The response time drops from 800ms to 3ms. The write path stays as rich and rule-heavy as it needs to be.

Two complete data paths. Two ledgers. One source of truth that flows through domain events into purpose-built read models. Pacioli would recognize the architecture — different books, same transactions, each optimized for the questions it needs to answer.

The separation is clean on paper. Production will test how clean it stays.

Exercise: Add a CancelOrderCommand and OrderCancelled event. The handler should enforce a rule: orders can only be cancelled within 30 minutes of creation. The projection should update the OrderSummary.Status to "Cancelled". What happens if the OrderItemAdded projection hasn't finished yet when OrderCancelled arrives?