The Event Sourcing Verdict — When History Earns Its Storage Cost

EventStoreDB, Marten, and Axon compared. A decision framework for .NET teams. And the four-week composition that changes how patterns interact.

In an architecture review last year, someone asked the question that always surfaces eventually: "If event sourcing gives us a complete history, why wouldn't we use it everywhere?"

The room went quiet. Not because the question was naive — it wasn't. It was the right question, asked at the wrong altitude. The answer isn't about whether history is valuable. History is always valuable. The answer is about whether the cost of maintaining that history — schema versioning, projection rebuilds, replay latency, developer onboarding — is proportional to the value your domain extracts from it.

I've built three event-sourced systems over the past decade. One was the right call. One was defensible but expensive. The third was a mistake I spent eight months unwinding. The difference wasn't the pattern. It was the domain.

Reading the rings

Dendrochronologists read tree rings to reconstruct centuries of climate history. Each ring is an immutable record — one growing season, permanently encoded in wood. A narrow ring means drought. A dark band means fire. The sequence is append-only and unfalsifiable.

But dendrochronology isn't how most forestry works. If you need to know whether a tree is healthy today, you measure its canopy, check for disease, assess the soil. You don't core-sample every tree in the forest. Ring analysis is reserved for the questions that only history can answer: was there a drought in 1847? How did this species respond to the volcanic winter of 1816?

Event sourcing is the core sample. CRUD with a good audit log is the canopy inspection. Both are valid. The question is which questions your domain actually asks.

The decision framework

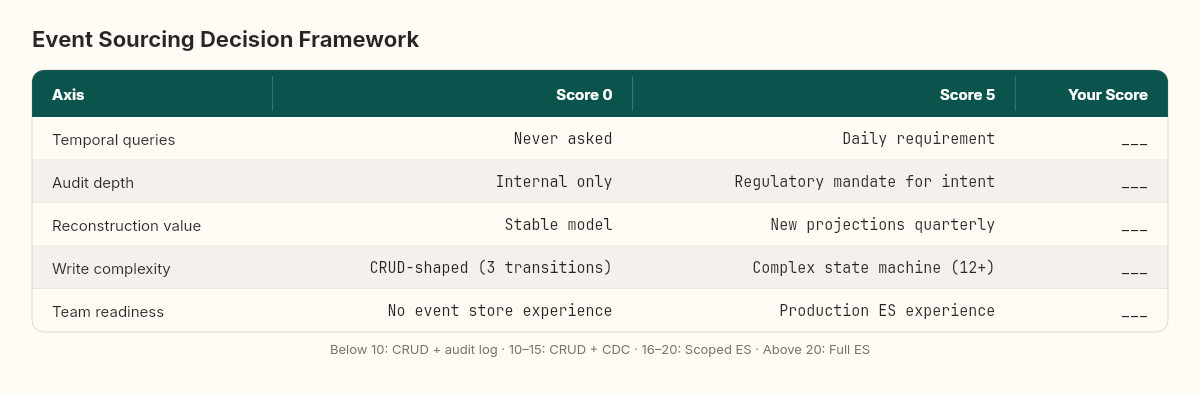

After three systems — one success, one expensive lesson, one rollback — I score domains on five axes before recommending event sourcing.

Temporal queries: Does the business ask "what was the state at time T?" If the answer is never or rarely, event sourcing solves a problem you don't have. Score: 0 (never) to 5 (daily).

Audit depth: Does regulation or compliance require knowing not just what changed but why it changed, with the original intent preserved? An audit log records facts. An event store records intent. Score: 0 (internal only) to 5 (regulatory mandate for intent).

Reconstruction value: Can you derive new insights by replaying old events through new projections? If your domain model is stable and unlikely to grow new read models, replay has no value. Score: 0 (stable model) to 5 (new projections quarterly).

Write complexity: How many distinct state transitions does your aggregate support? An aggregate with three transitions (Created, Updated, Deleted) doesn't need event sourcing. An order with twelve transition types across payment, fulfillment, returns, and disputes might. Score: 0 (CRUD-shaped) to 5 (complex state machine).

Team readiness: Has your team built and operated an event-sourced system before? This dimension is uncomfortable to score honestly, but it's the one that predicts failure most reliably. Score: 0 (no experience) to 5 (production experience with event stores).

Score interpretation:

Below 10: CRUD with audit log. You'll pay for event sourcing without extracting its value.

10–15: CRUD with change data capture. You get replay capability without the schema versioning tax.

16–20: Event sourcing with careful scope. Start with one bounded context, not the entire system.

Above 20: Event sourcing is the natural fit. The domain complexity justifies the infrastructure investment.

"The most expensive event-sourced system I've seen wasn't the one with the most events. It was the one where nobody ever queried the history."

To make this concrete: consider an e-commerce order system. Temporal queries? The finance team asks "what was the basket value before the discount was applied" weekly — score 3. Audit depth? PCI compliance requires transaction intent tracking — score 4. Reconstruction value? New dashboards every quarter for different departments — score 4. Write complexity? Twelve transition types across creation, payment, fulfillment, returns, and disputes — score 4. Team readiness? One engineer with EventStoreDB experience, others are learning — score 2. Total: 17. That lands in the "scoped event sourcing" band — event-source the order aggregate, keep the product catalog in CRUD, keep the user profile in CRUD.

Now consider a content management system. Temporal queries? Never — score 0. Audit depth? Internal only — score 1. Reconstruction value? The content model hasn't changed in two years — score 1. Write complexity? Created, updated, published, archived — score 1. Team readiness? Zero — score 0. Total: 3. Event sourcing here is a solution searching for a problem.

The gap between 17 and 3 is where architectural discipline lives. Both teams could implement event sourcing. Only one should.

Three approaches, honestly compared

The verdict isn't binary. There's a spectrum between "just use a database" and "event-source everything," and the middle options matter.

CRUD with audit log: Your entities live in normalized tables. A trigger or interceptor writes changes to an audit table with timestamps, user IDs, and before/after snapshots. You can answer "what changed" but not "why." Projection rebuilds are impossible — the audit log captures effects, not intent. But the developer experience is familiar, the tooling is mature, and every ORM on the market supports it. This is the right answer for the majority of domains.

CRUD with change data capture: Your entities live in tables, but a CDC pipeline (Debezium, the transaction log, or a custom poller) streams changes to a secondary store. You get replay capability and new projection creation without the schema versioning complexity of event sourcing. The trade-off: you're reconstructing intent from state diffs, which works for some domains and fails for others. If "price changed from 100 to 80" is sufficient (you don't need to distinguish between a discount, a correction, and a price match), CDC covers you.

Event sourcing: Your aggregate emits domain events that are the source of truth. Current state is derived, not stored. You get temporal queries, intent preservation, and unlimited projection creation. You pay for schema versioning, replay performance management, and a fundamentally different mental model that every developer on the team must internalize. This is the right answer when the domain's complexity genuinely lives in its transitions, not its current state.

The toolbox for .NET teams

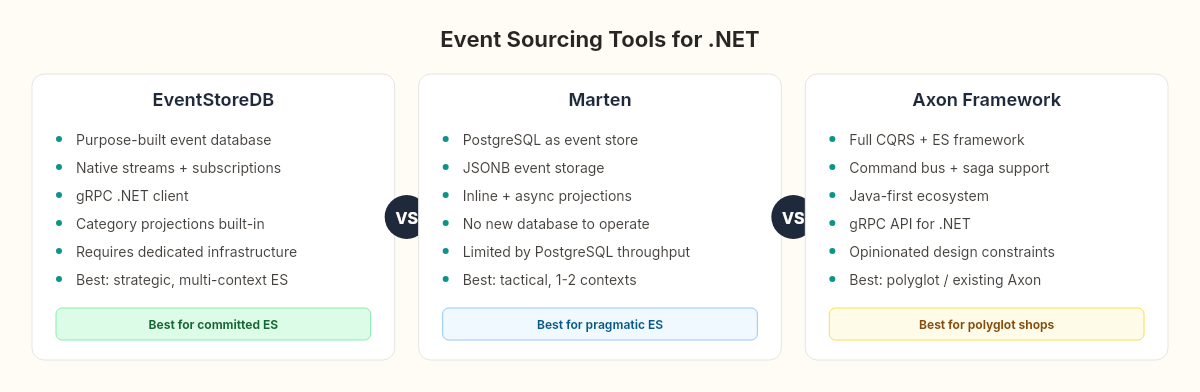

Three tools dominate the .NET event sourcing landscape. Each makes different trade-offs.

EventStoreDB is a purpose-built event database. It stores events in streams with built-in projections, subscriptions, and a category system that groups streams by aggregate type. The gRPC client for .NET is well-maintained, and the subscription model — catch-up subscriptions that track position automatically — reduces the projection infrastructure you need to build. The cost: it's another database to operate. Your team needs to understand stream design, projection management, and EventStoreDB's operational characteristics (compaction, scavenging, cluster topology). For teams committed to event sourcing as a primary persistence model, EventStoreDB is the most complete option.

Marten takes the opposite approach. It uses PostgreSQL as the event store, layering event sourcing semantics on top of a database your team already operates. Events are stored in a JSONB column. Projections are built using Marten's IProjection interface and can run inline (synchronous, within the same transaction) or asynchronously. The advantage is operational simplicity — no new database, no new deployment topology. The trade-off: you're limited by PostgreSQL's throughput characteristics for high-volume event streams, and you lose EventStoreDB's native stream subscription model. For teams that want event sourcing for one or two bounded contexts without adopting a new database, Marten is the pragmatic choice.

// Marten: event sourcing on PostgreSQL

var store = DocumentStore.For(opts =>

{

opts.Connection(connectionString);

opts.Projections.Add<OrderSummaryProjection>(ProjectionLifecycle.Async);

opts.Events.StreamIdentity = StreamIdentity.AsString;

});

await using var session = store.LightweightSession();

var stream = session.Events.StartStream<Order>(orderId,

new OrderCreated(orderId, customerId, DateTime.UtcNow),

new ItemAdded(orderId, "SKU-100", 2, 49.99m));

await session.SaveChangesAsync();Axon Framework is the most opinionated option. It provides a full CQRS + event sourcing framework with command buses, event handlers, saga management, and aggregate lifecycle built in. The .NET support exists through Axon Server's gRPC API, though the ecosystem is Java-first. For teams already using Axon Server (perhaps in a polyglot architecture), the integration is mature. For .NET-only teams starting fresh, the Java-centric documentation and community can be a friction point. Axon's strength is that it makes the correct patterns easy and the incorrect patterns hard — but that opinionation becomes a constraint when your domain doesn't fit Axon's assumptions.

The honest comparison:

For a .NET team making this decision today, the choice usually narrows to EventStoreDB vs Marten. Axon makes sense in polyglot shops or teams with existing Java-side Axon investment.

EventStoreDB when: event sourcing is a strategic commitment across multiple bounded contexts, you need native stream subscriptions and projections, and your team can absorb the operational overhead of a dedicated event database.

Marten when: event sourcing is tactical — one or two bounded contexts — and you want to avoid introducing a new database into your infrastructure. PostgreSQL is already there. Use it.

Four weeks, four patterns, one logbook

This is the moment where the Architecture Logbook pays off its structure.

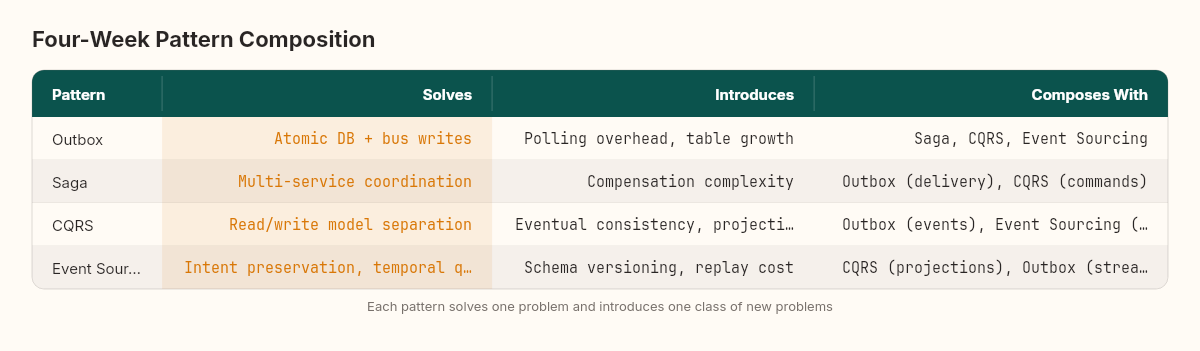

Over four weeks, we built four patterns that aren't independent. They compose — and the composition is where the real architectural decisions live.

The outbox guarantees that domain events reach the message bus atomically with database writes. Without it, every downstream pattern is built on unreliable foundations.

The saga consumes those events to coordinate multi-service business operations. It relies on the outbox's delivery guarantee to trigger compensation logic reliably.

CQRS separates the command side (where sagas and aggregates operate) from the query side (where read models serve the UI). Event sourcing's domain events become CQRS's integration events when projected into read models.

And event sourcing makes the events the source of truth — which means the outbox can read directly from the event stream, the saga can replay its history for debugging, and CQRS projections can be rebuilt from scratch without data loss.

The composition isn't symmetric. You can use an outbox without event sourcing. You can use CQRS without sagas. But event sourcing without CQRS is rare in practice — if events are your source of truth, you need projections for queries, and projections are the read side of CQRS.

In practice, the composition looks like this: a command arrives at the order aggregate. The aggregate emits events (event sourcing). Those events are appended to the event stream and simultaneously published via the outbox to the message bus. A saga orchestrator consumes the OrderConfirmed event and coordinates payment and fulfillment across services. Meanwhile, a projection handler reads the same events and updates a denormalized read model (CQRS read side) that the dashboard queries.

Each layer depends on the one below it. Remove the outbox, and the saga misses events. Remove event sourcing, and the projection can't rebuild from scratch. Remove CQRS, and the command side is fighting the query side for the same database resources. The patterns don't just coexist — they reinforce each other's guarantees.

But composition has a complexity budget. Every pattern you add increases the surface area for failures, the number of concepts your team must internalize, and the time it takes a new engineer to become productive. The four-pattern stack described above is appropriate for systems with genuine distributed complexity — multi-service coordination, regulatory audit requirements, and evolving read models. For a monolith serving a single team, the four-pattern stack is over-engineering by any honest measure.

"Each pattern solves one problem and introduces one class of new problems. The architecture logbook exists because the interaction between those new problems is where systems actually fail."

When not to use it

The hardest part of any verdict is admitting when the pattern you just spent a week building and measuring isn't the right answer.

Don't event-source aggregates with simple lifecycles. If your entity is created, occasionally updated, and eventually deleted — if the state machine has three or four transitions — the overhead of event serialization, schema versioning, and replay management outweighs the benefits. Use a database row. Add a ModifiedAt column. Move on.

Don't event-source when your team is learning distributed systems fundamentals. Event sourcing assumes comfort with eventual consistency, projection lag, and idempotent event handlers. If your team is still building confidence with asynchronous messaging, adding event sourcing amplifies every gap in that understanding.

Don't event-source for compliance alone. If the regulatory requirement is "show me what changed and when," an audit log or CDC pipeline satisfies it at a fraction of the operational cost. Event sourcing's value is reconstruction, not record-keeping.

And don't event-source everything. The boundary between event-sourced and CRUD contexts within the same system is not a design flaw — it's a design decision. The order aggregate, with its twelve state transitions and temporal query requirements, earns event sourcing. The product catalog, with its straightforward CRUD lifecycle, does not.

The logbook entry

Four patterns. Four weeks. Sixteen trade-offs. The measurement harness from the stress test produced numbers — 500–700 events before replay latency matters, 300 events before snapshots break even, 8.7 seconds to rebuild 100,000 events. Those numbers belong in your architecture decision record, alongside the decision framework scores, alongside the honest assessment of your team's readiness.

Before you close this article: score your current project on the five axes. Write down the number. If it's below 10, close the EventStoreDB documentation and open your ORM's audit trail configuration instead. The best architectural decisions are the ones where you chose not to build something.

Four patterns. Four weeks. And the most important lesson isn't about any single pattern — it's that the gap between "this is elegant" and "this earns its complexity" is where architectural maturity lives.