Trust Without Authority — The Byzantine Generals Problem in C#

Every distributed system you've ever built contains an implicit assumption about trust. This week, we make that assumption explicit — and build the cryptographic primitives that let strangers agree without trusting each other.

Every distributed system you've ever used today made a decision about trust before a single line of code was written.

Your bank's transaction system trusts a central ledger. Your company's microservices trust the message broker. The Redis clone we built earlier in the series trusts its single-threaded event loop — one process, one truth, no disagreement possible. Even the TCP protocol trusts that neither endpoint is actively lying about the data it sends.

These trust assumptions are so deeply embedded that most engineers never articulate them. They're the load-bearing walls of distributed architecture — invisible until you try to remove one.

This week, we remove one. We build a system where no participant trusts any other participant, where any node might be lying, where there is no central authority to appeal to — and we make it work anyway. The tool that makes this possible is cryptography. The theory that explains why it's hard is one of the most elegant results in computer science. And the data structure that emerges — the blockchain — is less interesting for what it's famous for than for what it teaches about the fundamental limits of distributed agreement.

The generals who can't trust their messengers

In 1982, Leslie Lamport, Robert Shostak, and Marshall Pease published a paper titled "The Byzantine Generals Problem." The setup is a thought experiment borrowed from game theory — a scenario designed to expose the structural impossibility at the heart of distributed consensus.

Several divisions of an army surround a city. Each division is commanded by a general. The generals can communicate only by sending messengers between each other. They need to agree on a common plan of action: attack or retreat. Some generals may be traitors — they may send different messages to different generals, or they may send no message at all, or they may actively try to prevent agreement.

The question: is it possible for the loyal generals to reach agreement despite the traitors?

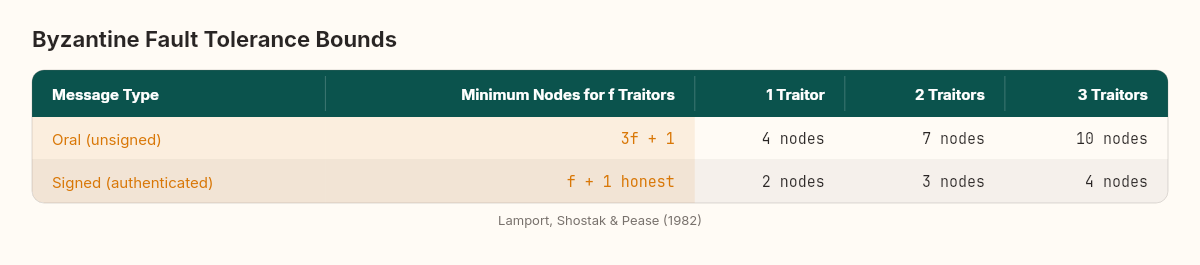

Lamport proved two things. First, with oral messages (messages that can be forged), you need at least 3f + 1 generals to tolerate f traitors. Three traitors require ten generals. One traitor requires four. If you have exactly three generals and one is a traitor, agreement is impossible — provably, mathematically impossible, not just difficult.

Second, with signed messages (messages that cannot be forged), the problem becomes solvable with any number of traitors less than the total. The signature is what changes everything. If General A signs a message, General B can verify that A actually sent it — and B can show the signed message to General C as proof. The traitor can still lie, but the lie is now detectable.

This distinction — between systems where messages can be forged and systems where they can't — is the boundary between systems that require trust and systems that don't. And it maps directly onto the architecture decisions we make every day.

// The core abstraction: a message that proves its origin

public record SignedMessage<T>(

T Content,

byte[] Signature,

byte[] PublicKey,

long Timestamp)

{

public bool Verify()

{

var contentBytes = JsonSerializer.SerializeToUtf8Bytes(Content);

var dataToVerify = contentBytes

.Concat(BitConverter.GetBytes(Timestamp))

.ToArray();

using var ecdsa = ECDsa.Create();

ecdsa.ImportSubjectPublicKeyInfo(PublicKey, out _);

return ecdsa.VerifyData(dataToVerify, Signature,

HashAlgorithmName.SHA256);

}

}This is where the series pivots. For three weeks, we built tools that assumed trust — the ORM trusted the database, the language trusted the developer, the Redis clone trusted the network. This experiment is about what happens when you can't assume trust. And the mathematics that Lamport laid out in 1982 is where we start.

Hash functions: the fingerprint that can't be faked

The first primitive we need is the cryptographic hash function. We used hashing in the Redis clone — ConcurrentDictionary uses GetHashCode() to distribute keys across buckets. But Redis's hash function optimizes for speed and distribution. A cryptographic hash function optimizes for three different properties:

Pre-image resistance. Given a hash value h, it's computationally infeasible to find any input m such that Hash(m) = h. You can't work backwards from the fingerprint to the original.

Second pre-image resistance. Given an input m₁, it's computationally infeasible to find a different input m₂ such that Hash(m₁) = Hash(m₂). You can't find a different document with the same fingerprint.

Collision resistance. It's computationally infeasible to find any pair of inputs (m₁, m₂) such that Hash(m₁) = Hash(m₂). No two documents should ever share a fingerprint, even if an adversary is deliberately trying to create a collision.

SHA-256 provides all three. In C#, using it is straightforward:

public static class Hashing

{

public static byte[] ComputeSha256(byte[] data)

{

return SHA256.HashData(data);

}

public static byte[] ComputeSha256(string text)

{

return SHA256.HashData(Encoding.UTF8.GetBytes(text));

}

public static string ToHex(byte[] hash)

{

return Convert.ToHexString(hash).ToLowerInvariant();

}

}The output is always 32 bytes — 256 bits — regardless of input size. Hash "hello" and you get 32 bytes. Hash the entire text of War and Peace and you get 32 bytes. Change a single character in War and Peace and the hash changes completely — no correlation between the two outputs, no way to predict what the new hash will be from the old one. Cryptographers call this the avalanche effect: a one-bit change in input produces, on average, a change in half the output bits.

The hash doesn't compress data. It commits to data. Once you publish a hash, you've publicly committed to the content that produced it — without revealing the content itself.

This property — commitment without disclosure — is the foundation of everything we build this week. A hash is a promise that can be verified by anyone but forged by no one.

Digital signatures: proving who said what

Hashing proves that data hasn't been tampered with. But it doesn't prove who created the data. For that, we need asymmetric cryptography — the mathematical trick that lets you prove authorship without sharing secrets.

The concept is straightforward: generate a pair of keys, one public and one private. The private key signs data. The public key verifies the signature. Anyone with the public key can confirm that the holder of the private key produced the signature — but they can't produce signatures themselves, and they can't derive the private key from the public key.

public class KeyPair

{

private readonly ECDsa _ecdsa;

public byte[] PublicKey { get; }

public byte[] PrivateKey { get; }

public KeyPair()

{

_ecdsa = ECDsa.Create(ECCurve.NamedCurves.nistP256);

PublicKey = _ecdsa.ExportSubjectPublicKeyInfo();

PrivateKey = _ecdsa.ExportPkcs8PrivateKey();

}

public byte[] Sign(byte[] data)

{

return _ecdsa.SignData(data, HashAlgorithmName.SHA256);

}

public bool Verify(byte[] data, byte[] signature)

{

return _ecdsa.VerifyData(data, signature, HashAlgorithmName.SHA256);

}

}.NET's ECDsa class implements Elliptic Curve Digital Signature Algorithm using the NIST P-256 curve — the same curve used by most TLS implementations, SSH keys, and cryptocurrency wallets. The math behind it involves point multiplication on elliptic curves over finite fields, which is beautiful but not necessary for using it correctly. What matters is the guarantee: computing a private key from a public key would require solving the Elliptic Curve Discrete Logarithm Problem, which is believed to be computationally infeasible with current hardware.

I spent years building financial transaction systems — machines that accepted coins and bills, processed payments, and reconciled ledgers across hardware and software boundaries. Every transaction needed to be attributable, verifiable, and tamper-evident. We didn't use digital signatures back then — the hardware was the trust boundary. A physical coin dropping through a sensor was its own proof of origin. But the problem was identical: how do you prove that a specific event happened, in a specific order, initiated by a specific actor, and that nobody altered the record after the fact?

The answer we built with hardware in 2013, we'll build with mathematics this week.

Merkle trees: when one hash isn't enough

A single hash verifies a single piece of data. But what if you have a thousand transactions and you want to prove that one specific transaction is included in the set — without downloading all thousand?

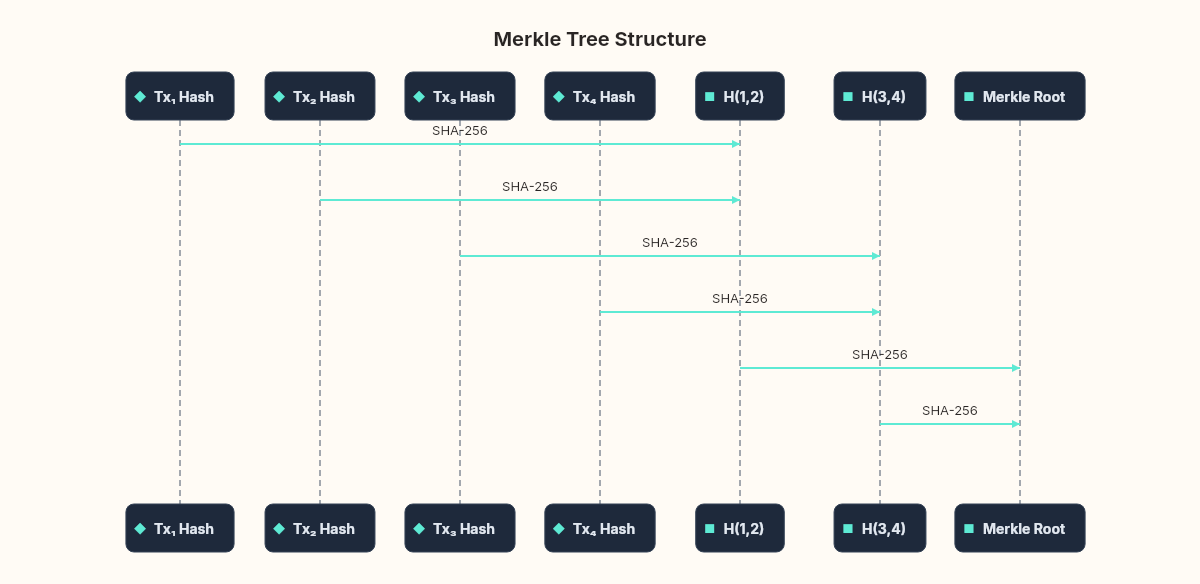

Ralph Merkle solved this in 1979 with a data structure that's now called a Merkle tree. The idea: hash each transaction individually, then pair the hashes and hash the pairs, then pair those hashes and hash them again — building a binary tree where every leaf is a transaction hash and every internal node is the hash of its two children.

public class MerkleTree

{

public byte[] Root { get; }

public MerkleTree(IReadOnlyList<byte[]> leaves)

{

if (leaves.Count == 0)

throw new ArgumentException("Cannot build tree from empty leaves.");

var currentLevel = leaves

.Select(l => SHA256.HashData(l))

.ToList();

while (currentLevel.Count > 1)

{

var nextLevel = new List<byte[]>();

for (int i = 0; i < currentLevel.Count; i += 2)

{

var left = currentLevel[i];

var right = i + 1 < currentLevel.Count

? currentLevel[i + 1]

: currentLevel[i]; // duplicate last if odd

var combined = new byte[left.Length + right.Length];

left.CopyTo(combined, 0);

right.CopyTo(combined, left.Length);

nextLevel.Add(SHA256.HashData(combined));

}

currentLevel = nextLevel;

}

Root = currentLevel[0];

}

}The root hash — a single 32-byte value — commits to every transaction in the tree. Change one transaction and the root changes. Add a transaction and the root changes. Remove a transaction and the root changes. The root is a cryptographic summary of the entire dataset.

But the real power is in inclusion proofs. To prove that transaction T₅ is in the tree, you don't need all the transactions. You need T₅'s hash, plus the sibling hashes along the path from T₅ to the root — typically log₂(n) hashes. For a tree of 1,000 transactions, that's 10 hashes. For a million transactions, 20 hashes. The verification cost grows logarithmically while the dataset grows linearly.

This is the structure that connects the individual transactions to the block header. Each block in our blockchain will contain a Merkle root that commits to every transaction in the block. If someone claims "transaction X is in block 47," you can verify that claim with 20 hashes instead of downloading the entire block.

The block: linking time to trust

Now we have the pieces. Hashes commit to data. Signatures prove authorship. Merkle trees efficiently commit to sets of data. The final structure — the block — chains these primitives together into a timeline that can't be rewritten.

public class Block

{

public int Index { get; init; }

public long Timestamp { get; init; }

public byte[] PreviousHash { get; init; } = Array.Empty<byte>();

public byte[] MerkleRoot { get; init; } = Array.Empty<byte>();

public int Nonce { get; set; }

public List<Transaction> Transactions { get; init; } = new();

public byte[] Hash => ComputeHash();

private byte[] ComputeHash()

{

var header = $"{Index}{Timestamp}" +

$"{Convert.ToHexString(PreviousHash)}" +

$"{Convert.ToHexString(MerkleRoot)}" +

$"{Nonce}";

return SHA256.HashData(Encoding.UTF8.GetBytes(header));

}

}

public record Transaction(

string From,

string To,

decimal Amount,

long Timestamp,

byte[] Signature);Each block contains a reference to the previous block's hash. This is the "chain" in blockchain — not a linked list of pointers, but a linked list of cryptographic commitments. Block 47 commits to block 46's hash. Block 46 commits to block 45's hash. If you change anything in block 45 — any transaction, any timestamp, any field — its hash changes. Block 46's PreviousHash no longer matches. Block 47's PreviousHash no longer matches block 46's new hash. The tampering cascades forward through every subsequent block.

To rewrite history, you'd need to recompute every block from the point of modification to the present. That's where proof of work — the subject of the next article — makes this computationally impossible.

Building a chain from genesis

The first block has no predecessor. It's called the genesis block — created by convention, not by mining.

public class Blockchain

{

private readonly List<Block> _chain = new();

public IReadOnlyList<Block> Chain => _chain;

public Blockchain()

{

_chain.Add(CreateGenesisBlock());

}

private static Block CreateGenesisBlock()

{

return new Block

{

Index = 0,

Timestamp = DateTimeOffset.UnixEpoch.ToUnixTimeSeconds(),

PreviousHash = new byte[32], // all zeros

MerkleRoot = SHA256.HashData("genesis"u8.ToArray()),

Nonce = 0,

Transactions = new List<Transaction>()

};

}

public Block AddBlock(List<Transaction> transactions)

{

var previousBlock = _chain[^1];

var merkleTree = new MerkleTree(

transactions.Select(tx =>

Encoding.UTF8.GetBytes($"{tx.From}{tx.To}{tx.Amount}{tx.Timestamp}"))

.ToList());

var block = new Block

{

Index = previousBlock.Index + 1,

Timestamp = DateTimeOffset.UtcNow.ToUnixTimeSeconds(),

PreviousHash = previousBlock.Hash,

MerkleRoot = merkleTree.Root,

Transactions = transactions

};

_chain.Add(block);

return block;

}

public bool IsValid()

{

for (int i = 1; i < _chain.Count; i++)

{

var current = _chain[i];

var previous = _chain[i - 1];

if (!current.PreviousHash.SequenceEqual(previous.Hash))

return false;

}

return true;

}

}The IsValid() method walks the chain and verifies that every block's PreviousHash matches the previous block's actual hash. If any block has been tampered with, the chain breaks. This is the core invariant — the property that makes the entire structure trustworthy.

What Lamport's bound means for the code we write

The Byzantine Generals result isn't just historical context. It defines the actual limits of what our code can achieve.

With oral messages — systems where nodes can claim anything without proof — you need 3f + 1 nodes to tolerate f failures. A three-node cluster can't survive even one Byzantine failure. A five-node cluster can survive one. Seven nodes, two failures.

With signed messages — our SignedMessage<T> from earlier — the bound relaxes. Any number of Byzantine failures can be tolerated as long as at least one honest node exists and messages are signed. The signature prevents the core attack: a traitor sending contradictory messages to different generals.

Our blockchain uses signed transactions, which means we're in the signed-message regime. But we still need a mechanism to decide which block is the correct next block when multiple nodes propose different blocks simultaneously. That mechanism — proof of work, proof of stake, or one of a dozen alternatives — is what transforms the data structure into a consensus protocol.

We'll build proof of work in the next article. For now, the important insight is that the cryptographic primitives we've built here aren't just tools. They're the mathematical response to Lamport's impossibility result. Hashes make tampering detectable. Signatures make forgery detectable. Merkle trees make verification efficient. The blockchain structure makes history immutable.

Lamport proved that without signatures, consensus among three nodes is impossible with even one traitor. With signatures, our chain of hashes becomes a chain of unforgeable commitments — each block a signed message that every honest node can independently verify.

These three primitives — hash, signature, Merkle tree — appear in every system that operates without central trust. TLS certificates use them. Git uses them (every commit is a hash of its content plus its parent's hash — Git is a blockchain, structurally). Package managers use them. Certificate transparency logs use them. The blockchain is the most famous application, but the primitives predate it by decades and will outlast any specific cryptocurrency.

What this week builds

We've spent three weeks building systems that assumed trust — and those systems worked because the trust assumptions were appropriate. An ORM trusts the database. A language trusts the developer. A key-value store trusts its single process. Those are reasonable assumptions for the domains they serve.

This week's experiment asks: what do you build when those assumptions fail? When the database might lie? When the other nodes might be adversaries? When there's no single process to trust?

The answer starts with the primitives we built here. Next, we add proof of work — the mechanism that makes rewriting history computationally expensive. Then we chain blocks into a ledger that multiple nodes can independently verify. After that, we simulate a network of nodes that reach consensus despite Byzantine participants. And finally, we measure the actual energy and compute cost of everything we've built.

The Byzantine Generals Problem is forty-four years old. The primitives we used today — SHA-256, ECDSA, Merkle trees — are between twenty-five and forty-seven years old. The insight they encode is older still: in any system with adversarial participants, you cannot substitute trust for proof. You can only substitute expensive proof for cheap trust, and the art is in knowing which price is worth paying.