What Building a Language Taught Me That Using One Never Could

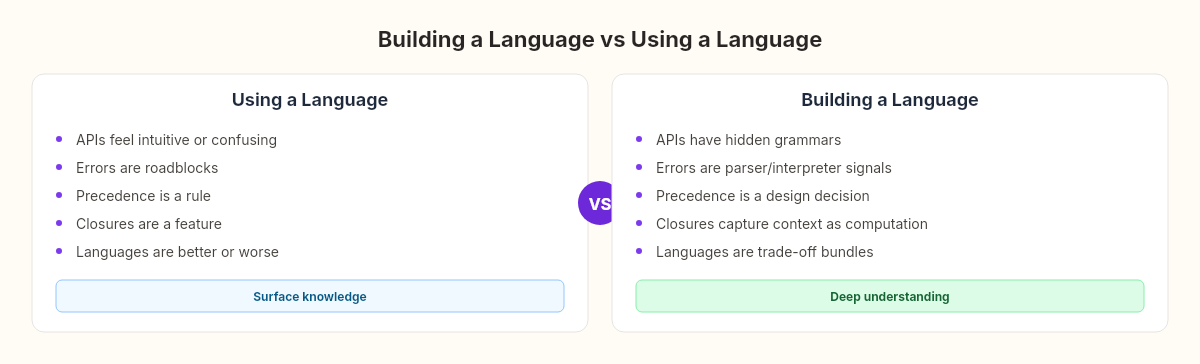

Six chapters of grammars, lexers, parsers, interpreters, and closures. The language works. But the real output isn't the code — it's what changed about how I see every language I use.

Before this week, I could hold my own in any conversation about programming languages.

I knew about compilers and interpreters. I could explain the difference between lexing and parsing. I'd read enough blog posts to be conversational about abstract syntax trees. If you'd asked me at a conference, I'd have given a reasonable answer.

Then I built one. And the conversation changed.

Five chapters. About 500 lines of C#. A grammar, a lexer, a parser, an interpreter, functions with closures. A language that can define variables, do arithmetic, branch, loop, and return values from functions that remember where they were born.

It's not much. It's not even close to what C# or Python or JavaScript does. But building it changed something about how I think about every language I touch. Not because the implementation was complex — because it was simple, and the simplicity revealed things that complexity hides.

In traditional craftsmanship, there's a practice called the masterpiece. An apprentice who has studied for years under a master must build one complete work — a cabinet, a clock, a saddle — from raw materials to finished product. Not because the world needs another cabinet. Because the act of building from nothing reveals what assembly from parts never can. You don't understand wood until you've shaped it. You don't understand joints until they've failed under your hands.

The masterpiece tradition exists because using a thing and building a thing produce different kinds of understanding.

This language was my masterpiece project for Week 2. Here are six things it taught me that no amount of using C# ever could.

1. Every API you've ever used has a grammar — you just can't see it

When I wrote the grammar for our language in the earlier chapter about formal language structure, I defined 20 rules in EBNF notation. That felt like something specific to programming languages — a formal exercise that "real" programmers don't need to think about.

But then I realized: every API has a grammar. Every fluent interface, every query builder, every configuration DSL. When you write:

builder.Services

.AddAuthentication()

.AddJwtBearer(options => { ... });You're following a grammar. AddAuthentication() must come before AddJwtBearer(). You can't call AddJwtBearer() without first calling AddAuthentication(). That ordering isn't documented as a grammar — it's encoded in types and method signatures and runtime exceptions. But it is a grammar.

The difference is that our language's grammar is explicit and visible. You can read the 20 rules and know exactly what's legal and what isn't. Most APIs hide their grammar across dozens of classes, overloads, and XML comments. When an API feels "intuitive," it's because its hidden grammar is simple. When it feels "confusing," the grammar is complex or inconsistent.

Building a grammar from scratch gave me a vocabulary for something I'd been feeling for years without being able to name it.

2. Precedence is an opinion, not a fact

In the chapter where we built the parser, operator precedence emerged not from some law of mathematics, but from the structure of our grammar. Multiplication binds tighter than addition because Factor() is called from within Term(), not the other way around. If we'd reversed them, addition would bind tighter.

This is an engineering decision, not a mathematical one. Mathematics doesn't have operator precedence — it has parentheses. The convention that 2 + 3 * 4 equals 14 (not 20) is a choice that someone made and everyone agreed to follow.

// Our grammar encodes precedence as depth:

private Expr Term() // + and - (looser)

{

var left = Factor(); // * and / (tighter)

// ...

}Every language makes these choices. Some make different ones. APL evaluates strictly right-to-left. Smalltalk has no precedence at all — everything is left-to-right, and you use parentheses to override. These aren't "wrong" — they're different opinions about the same problem.

After building a parser, I stopped thinking of operator precedence as a rule I follow and started thinking of it as a design decision someone made. That shift matters because it generalizes: every "rule" in a programming language is someone's decision. Understanding which decisions are essential and which are arbitrary helps you learn new languages faster and argue about them less.

3. What separates syntax from semantics is what separates knowing from understanding

As we explored in the chapter about building the interpreter, the parser produces a perfect structural description of a program, and the interpreter is what gives that structure meaning. A Binary(Literal(3), Plus, Literal(4)) node doesn't know that Plus means addition. The tree is just syntax. The interpreter provides the semantics.

This distinction — syntax vs semantics — is the most important thing I've internalized from this project.

I used to read error messages as annoyances. Now I see them as signals about which layer failed. A syntax error means the parser couldn't build a tree. A runtime error means the interpreter built the tree but couldn't evaluate it. A logic bug means both layers worked perfectly and the semantics of what I wrote don't match the semantics of what I meant.

Most debugging is a semantic problem disguised as a syntactic one. We stare at the code (syntax) when the real question is what the code means (semantics).

This isn't abstract philosophy. It's a practical tool. When something breaks, asking "is this a syntax problem or a semantics problem?" narrows the search space immediately.

4. Closures prove that context is computation

The functions chapter showed that a closure is a function plus its defining environment. The function increment() that closes over a count variable doesn't just carry code — it carries state. The environment is part of the computation.

// The closure captures _closure, not the current environment

var environment = new Environment(_closure);This one line changed how I think about dependency injection, about middleware pipelines, about React hooks. Every pattern that "captures context and uses it later" is a closure in disguise. When a .NET middleware captures ILogger from its constructor and uses it in InvokeAsync, that's a closure. When a React component captures state through useState, that's a closure.

The parallel with dependency injection is worth spelling out, because it's almost suspiciously exact:

// DI captures dependencies at registration time —

// this is a closure with a different name

services.AddScoped<OrderService>(sp => {

var logger = sp.GetRequiredService<ILogger>();

return new OrderService(logger);

});The factory lambda captures sp and uses it to resolve logger. This is exactly what our LoxFunction does — it creates a new Environment from the closure, binds the parameters, and executes the body. The service provider is the environment. The registration is the definition site. The resolution is the call site. DI frameworks are closures wearing enterprise clothing.

I knew this intellectually. But building the mechanism — writing the Environment class, the parent-chain lookup, the capture at definition time — made it visceral. Context isn't metadata. Context isn't documentation. Context is part of what the code does.

5. Building a tool changes how you use every tool

This is the lesson that transcends programming languages.

Before this week, when C# threw a CS1525: Invalid expression term, I saw a roadblock. Now I see a parser that failed to match a production rule at a specific token. The error message is the parser telling me exactly which grammar rule it was trying to match and which token it found instead.

Before this week, when a LINQ expression produced unexpected results, I saw a bug. Now I see an expression tree that evaluated differently than I expected — a semantic gap between what I wrote and what I meant.

Before this week, when someone said "C# is better than JavaScript" or "Python is more readable than Rust," I heard opinions. Now I hear people comparing design decisions: eager vs lazy evaluation, static vs dynamic typing, manual vs garbage-collected memory. These aren't quality judgments. They're trade-offs, and each choice has costs that the grammar and the interpreter encode silently.

Our language is ~500 lines. C# is millions. But the core architecture is the same: text goes in, tokens come out, tokens become a tree, the tree becomes behavior. Every language I will ever use follows this pipeline. Building it once, from scratch, made the pipeline visible forever.

6. Error messages are a conversation between layers

When the lexer fails, it says "unexpected character." When the parser fails, it says "expected ')' after expression." When the interpreter fails, it says "undefined variable 'x'." Each layer speaks in its own vocabulary about its own concerns. Building all three layers made error messages navigable — not because they got clearer, but because I learned which layer was talking.

This maps directly onto the errors every .NET developer sees daily. A CS1002 syntax error is Roslyn's parser saying "expected semicolon" — it built the token stream fine, but the grammar rule didn't match. A CS0103 is the semantic analyzer saying "this name doesn't exist in the current scope" — the tree was built, but the meaning couldn't be resolved. A NullReferenceException at runtime is the CLR's interpreter saying "this reference doesn't point anywhere" — syntax and semantics were both fine, but the actual state at execution time didn't match the assumption.

Before building these layers myself, all three categories felt the same: the computer is angry. After building them, each error is a specific layer reporting a specific failure in its own domain. The lexer talks about characters. The parser talks about structure. The interpreter talks about values. Knowing who is talking narrows the search before you've even looked at the line number.

What the apprentice keeps

The traditional masterpiece doesn't get sold. It doesn't go into production. It often gets displayed for the guild, examined, critiqued, and then put away. The apprentice doesn't keep the cabinet.

The apprentice keeps the understanding.

Our language won't process a single real request. It won't serve a single user. But the understanding it produced — of grammars hiding in APIs, of precedence as opinion, of syntax vs semantics, of closures as captured context, of error layers speaking different languages, of tools as frozen design decisions — that stays.

In woodworking, the masterpiece teaches you grain direction — something invisible until you've planed wood and felt the difference between with-grain and against-grain. One direction is smooth. The other tears. You can read about this in every woodworking book ever written, and it means nothing until the plane catches and the surface splinters under your hands. In language building, the equivalent insight is the syntax-semantics boundary — invisible until you've built both layers and felt the difference between "the parser accepts this" and "the interpreter can execute this." One is structure. The other is meaning. You can't feel that distinction by using a language. You feel it by building one.

What comes next is something entirely different. We leave interpreters behind and start building infrastructure. But the lens this experiment gave us — the ability to see the language underneath the language — that's permanent equipment.