What Redis Actually Does — And Why It's Not Just a Cache

Everyone knows Redis is fast. Almost nobody asks why. The answer starts with physics, passes through data structures, and ends with a question this entire week will try to answer.

What if your database lived entirely in RAM?

Not as a cache sitting in front of the real database. Not as a temporary optimization you bolt on when things get slow. As the database itself — the primary store, the source of truth, the thing your application talks to first.

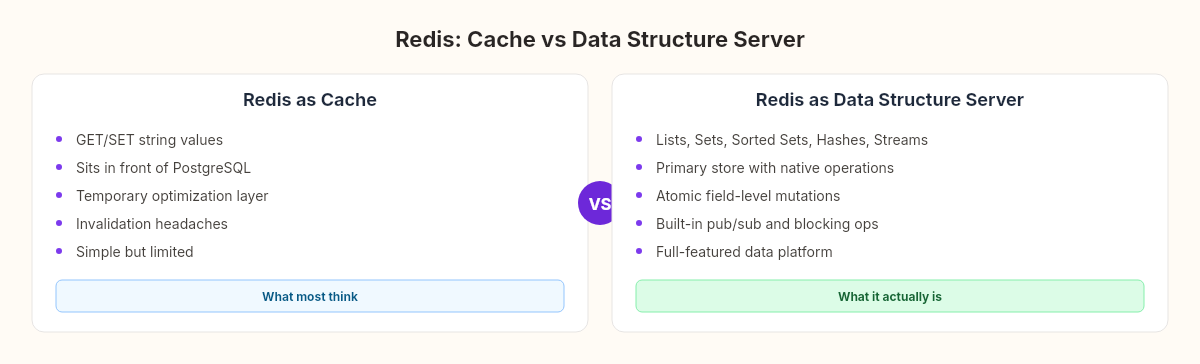

That's not a hypothetical. That's what Redis is. And the fact that most developers think of it as "that caching layer we put in front of PostgreSQL" reveals something important about how we think about speed, memory, and what a database actually needs to be.

This week in The C# Lab, we're building a Redis clone from scratch. A TCP server, a protocol parser, a key-value store, concurrent request handling, persistence. By the end of this arc, we'll benchmark our version against the real thing and measure exactly how far our implementation falls short — and why.

But before we write a line of code, we need to understand what Redis actually does. Not the API. Not the commands. The physics underneath.

The speed of forgetting

In 1946, Arthur Burks, Herman Goldstine, and John von Neumann wrote a proposal for a stored-program computer. One of their core observations was deceptively simple: the speed of computation is bounded by the speed of memory access. The processor can only think as fast as it can remember.

Eighty years later, that constraint hasn't changed. It's just gotten more layered.

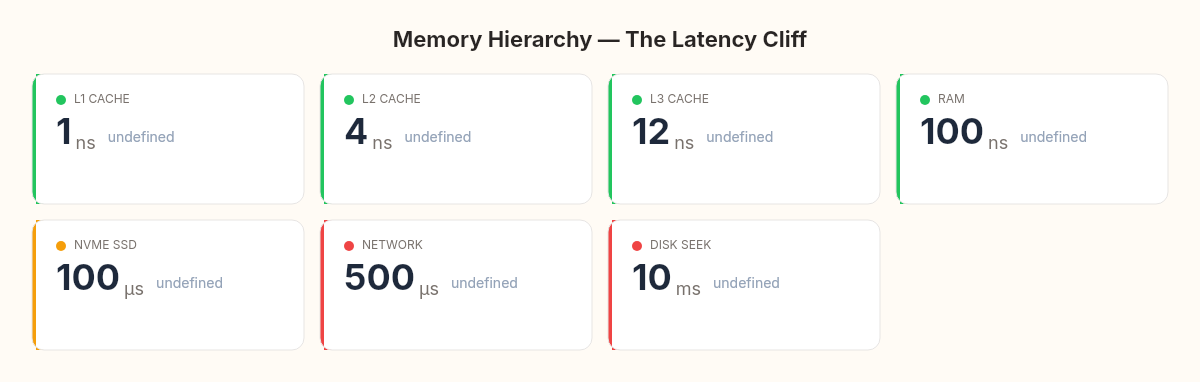

Your CPU's L1 cache can deliver data in about 1 nanosecond. L2 takes roughly 4 nanoseconds. L3, maybe 12. Main RAM — the DRAM on your motherboard — takes about 100 nanoseconds. An NVMe SSD takes around 100 microseconds. A network round-trip to a database server in the same data center takes 500 microseconds to a few milliseconds.

L1 cache: ~1 ns

L2 cache: ~4 ns

L3 cache: ~12 ns

RAM: ~100 ns

NVMe SSD: ~100,000 ns (100 μs)

Network: ~500,000 ns (0.5 ms)

Disk seek: ~10,000,000 ns (10 ms)That's not a linear progression. It's a cliff. The jump from RAM to SSD is a factor of 1,000. The jump from RAM to a network call is a factor of 5,000. The jump from RAM to a spinning disk is a factor of 100,000.

When people say Redis is fast, what they actually mean is: Redis lives on the right side of a 1,000x cliff.

A traditional database stores its data on disk and uses RAM as a buffer pool — a cache of recently accessed pages. When your query hits data that's already in the buffer pool, it's fast. When it doesn't, the database has to reach across that 1,000x cliff, read pages from disk, and copy them into memory before it can even begin processing your query.

Redis skips the cliff entirely. Every data structure, every key, every value lives in RAM at all times. There is no buffer pool miss. There is no disk read in the hot path. The question isn't "is this data cached?" — it's "is this machine powered on?"

That's not an optimization. It's a fundamentally different architecture.

Not a dictionary — a data structure server

The "Redis is a cache" mental model breaks down completely at this point.

If Redis were just a fast key-value store — a Dictionary<string, string> that lives in RAM and speaks TCP — it would be useful but unremarkable. You could build that in an afternoon with a ConcurrentDictionary and a TcpListener. We'll do exactly that in an upcoming chapter.

But Redis isn't a dictionary. It's a data structure server. Every value stored in Redis has a type, and each type supports operations that are native to that data structure.

Strings are the simplest — SET key "value", GET key. But even strings support atomic increment (INCR), bit manipulation (SETBIT), and substring operations (GETRANGE). They're closer to a byte[] with an API than a System.String.

Lists are doubly-linked lists. You can push to either end (LPUSH, RPUSH), pop from either end (LPOP, RPOP), or block until an element appears (BLPOP). That blocking pop is what makes Redis lists work as message queues — a consumer calls BLPOP and the connection sleeps until a producer pushes something. No polling. No busy-waiting.

Sets are unordered collections with O(1) membership testing. Sorted sets (ZSETs) are the same but with a floating-point score attached to each member, maintained in a skip list that gives you O(log N) insertion and O(log N + M) range queries. This is the data structure behind leaderboards, rate limiters, and time-series windows.

Hashes are maps within maps — HSET user:1001 name "Anto" age "38" stores a mini-object under a single key. Instead of serializing and deserializing a JSON blob on every access, you read and write individual fields atomically.

Streams (added in Redis 5.0) are append-only logs with consumer groups — essentially a built-in, durable message broker.

// What most people think Redis does:

var cache = new Dictionary<string, string>();

cache["user:1001"] = JsonSerializer.Serialize(user);

// What Redis actually does:

// HSET user:1001 name "Anto" age 38 city "Puglia"

// HINCRBY user:1001 login_count 1

// HGET user:1001 name

// → Each field is independently addressable and atomically mutableThe difference is not cosmetic. When your Dictionary<string, string> stores a serialized JSON blob, every update requires: deserialize → modify → reserialize → store. Four operations, with the serialization overhead dominating. When Redis stores a hash, updating a single field is one atomic operation with zero serialization.

The reason most developers underestimate Redis is that they've only ever used GET and SET. That's like using a Swiss Army knife as a screwdriver.

The physics of single-threaded speed

Here's something that surprises people who come from the .NET world: Redis processes commands on a single thread.

One thread. For everything. No ConcurrentDictionary. No lock statements. No Interlocked.CompareExchange. One thread reads commands from the network, executes them against the in-memory data structures, and writes responses back. Sequentially.

This sounds insane to anyone who's spent time optimizing .NET services with Task.WhenAll and parallel processing. But it works because of where Redis lives in the memory hierarchy.

If your bottleneck is CPU computation — parsing JSON, transforming data, running business logic — single-threaded execution is a disaster. But Redis doesn't do computation. It does memory operations. And memory operations on data that's already in CPU cache are so fast that the overhead of coordination between threads would dwarf the actual work.

Consider what a lock costs. On modern hardware, an uncontested lock in .NET takes about 20-25 nanoseconds. A contested lock — where another thread actually holds it — takes hundreds of nanoseconds to milliseconds, depending on how long you wait. A ConcurrentDictionary operation takes 50-100 nanoseconds because of its fine-grained locking and hash bucket coordination.

Redis's GET operation takes about 1 microsecond end-to-end, including network I/O. The actual memory lookup — finding the key in the hash table and returning the value — takes maybe 50-100 nanoseconds. If Redis used locks to protect that operation, the locking overhead would equal or exceed the operation itself.

// The .NET instinct: protect shared state with concurrency primitives

var dict = new ConcurrentDictionary<string, string>();

// ~50-100ns per operation (lock overhead + hash + memory)

// The Redis insight: eliminate sharing entirely

// Single thread = no contention = no synchronization overhead

// ~50-100ns for the memory operation itself

// The network I/O is the bottleneck, not the data structureThis is the same insight we encountered with closures in the chapter about functions and environments in our language project — that sometimes the simplest architecture is the fastest one, because avoiding complexity avoids the overhead of managing complexity. Redis's single thread is the ultimate closure: it captures the entire data store in one execution context and never shares it.

The contract you're signing

Trade-off thinking requires naming the price. Redis pays three.

First: memory is expensive. A server with 256 GB of RAM costs significantly more than a server with 256 GB of NVMe storage. For datasets that fit in tens of gigabytes, this is fine. For datasets that grow to terabytes, it's prohibitive. Redis Cluster can shard across machines, but each machine still stores its shard entirely in RAM.

Second: volatility. RAM is volatile. When the power goes out, everything in RAM disappears. Redis offers two persistence mechanisms — RDB snapshots and AOF (append-only file) logs — but neither is free. RDB snapshots are periodic and lose data between snapshots. AOF logs every write operation, which means disk I/O re-enters the picture in the write path. A later chapter on persistence will explore exactly how much this costs.

Third: single-thread limits. While a single Redis instance can handle 100,000+ operations per second on typical hardware, it can't scale vertically beyond what one CPU core can deliver. Horizontal scaling via Redis Cluster adds operational complexity — hash slots, resharding, cross-slot transaction limitations.

These aren't weaknesses. They're the terms of a contract. RAM gives you speed; you pay with cost and volatility. Single-threaded execution gives you simplicity; you pay with a per-core throughput ceiling. Every architectural decision in Redis reflects this contract.

Why we're building one

The point of this week isn't to build a production Redis replacement. Antirez's redis-server is one of the most carefully optimized C programs ever written. Our C# version will be slower by an order of magnitude — maybe more.

The point is the same point it's been every week in The C# Lab: building reveals what using conceals.

When you call SET key value EX 60 in a Redis client library, you're invoking a protocol that serializes your command into RESP format, sends it over a TCP connection, gets parsed by the server, dispatched to a command handler, executed against an in-memory data structure, and responded to with a RESP-formatted acknowledgment. That entire pipeline is invisible when you use a client library. Building it makes every layer visible.

The RESP protocol parser we'll write should feel familiar if you followed the lexer we built in the chapter about tokenizing source code — it's the same fundamental operation. Scanning a stream of bytes. Recognizing patterns. Producing structured output. The source language changed from our custom grammar to the Redis protocol. The architecture didn't.

The command dispatcher will feel like a simpler version of the interpreter we built in the chapter about walking the AST — pattern-matching on a command type and executing the appropriate operation. GET maps to a dictionary lookup. SET maps to a dictionary write. DEL maps to a remove. The parallels aren't metaphorical. They're structural.

The question underneath

There's a question that lives underneath every in-memory system, and it's the question that will drive this entire week:

How do you make something fast and durable at the same time?

Speed comes from RAM. Durability comes from disk. RAM is 1,000 times faster than disk. Any persistence mechanism introduces the very latency that the in-memory architecture was designed to eliminate.

Redis's answer is nuanced — snapshot periodically, log continuously, accept that some data loss is possible in a crash, and let the operator choose their position on the speed-durability spectrum. It's not a solution. It's a set of trade-offs with explicit knobs.

Our answer, by the end of this week, will be our own. We'll build both mechanisms — append-only logging and point-in-time snapshots — and measure exactly what each one costs. Then we'll benchmark the whole thing against real Redis and see where 500 lines of C# falls short of 100,000 lines of C.

The gap will be humbling. But the gap is where the learning lives.